Theoretical bounds on estimation error for meta-learning

Abstract

Machine learning models have traditionally been developed under the assumption that the training and test distributions match exactly. However, recent success in few-shot learning and related problems are encouraging signs that these models can be adapted to more realistic settings where train and test distributions differ. Unfortunately, there is severely limited theoretical support for these algorithms and little is known about the difficulty of these problems. In this work, we provide novel information-theoretic lower-bounds on minimax rates of convergence for algorithms that are trained on data from multiple sources and tested on novel data. Our bounds depend intuitively on the information shared between sources of data, and characterize the difficulty of learning in this setting for arbitrary algorithms. We demonstrate these bounds on a hierarchical Bayesian model of meta-learning, computing both upper and lower bounds on parameter estimation via maximum-a-posteriori inference.

1 Introduction

Many practical machine learning applications deal with distributional shift from training to testing. One example is few-shot classification (Ravi and Larochelle, 2016; Vinyals et al., 2016), where new classes need to be learned at test time based on only a few examples for each novel class. Recently, few-shot classification has seen increased success; however, theoretical properties of this problem remain poorly understood.

In this paper we analyze the meta-learning setting, where the learner is given access to samples from a set of meta-training distributions, or tasks. At test-time, the learner is exposed to only a small number of samples from some novel task. The meta-learner aims to uncover a useful inductive bias from the original samples, which allows them to learn a new task more efficiently.111Note that this definition encompasses few-shot learning. While some progress has been made towards understanding the generalization performance of specific meta-learning algorithms (Amit and Meir, 2017; Khodak et al., 2019; Bullins et al., 2019; Denevi et al., 2019; Cao et al., 2019), little is known about the difficulty of the meta-learning problem in general. Existing work has studied generalization upper-bounds for novel data distributions (Ben-David et al., 2010; Amit and Meir, 2017), yet to our knowledge, the inherent difficulty of these tasks relative to the i.i.d case has not been characterized.

In this work, we derive novel bounds for meta learners. We first present a general information theoretic lower bound, Theorem 1, that we use to derive bounds in particular settings. Using this result, we derive lower bounds in terms of the number of training tasks, data per training task, and data available in a novel target task. Additionally, we provide a specialized analysis for the case where the space of learning tasks is only partially observed, proving that infinite training tasks or data per training task are insufficient to achieve zero minimax risk (Corollary 2).

We then derive upper and lower bounds for a particular meta-learning setting. In recent work, Grant et al. (2018) recast the popular meta-learning algorithm MAML (Finn et al., 2017) in terms of inference in a Bayesian hierarchical model. Following this, we provide a theoretical analysis of a hierarchical Bayesian model for meta-linear-regression. We compute sample complexity bounds for posterior inference under Empirical Bayes (Robbins, 1956) in this model and compare them to our predicted lower-bounds in the minimax framework. Furthermore, through asymptotic analysis of the error rate of the MAP estimator, we identify crucial features of the meta-learning environment which are necessary for novel task generalization.

Our primary contributions can be summarized as follows:

-

We introduce novel lower bounds on minimax risk of parameter estimation in meta-learning.

-

Through these bounds, we compare the relative utility of samples from meta-training tasks and the novel task and emphasize the importance of the relationship between the tasks.

-

We provide novel upper bounds on the error rate for estimation in a hierarchical meta-linear-regression problem, which we verify through an empirical evaluation.

2 Related work

An early version of this work (Lucas et al., 2019) presented a restricted version of Theorem 1. The current version includes significantly more content, including more general lower bounds and corresponding upper bounds in a hierarchical Bayesian model of meta-learning (Section 5).

Baxter (2000) introduced a formulation for inductive bias learning where the learner is embedded in an environment of multiple tasks. The learner must find a hypothesis space which enables good generalization on average tasks within the environment, using finite samples. In our setting, the learner is not explicitly tasked with finding a reduced hypothesis space but instead learns using a general two-stage approach, which matches the standard meta-learning paradigm (Vilalta and Drissi, 2002). In the first stage an inductive bias is extracted from the data, and in the second stage the learner estimates using data from a novel task distribution. Further, we focus on bounding minimax risk of meta learners. Under minimax risk, an optimal learner achieves minimum error on the hardest learning problem in the environment. While average case risk of meta learners is more commonly studied, recent work has turned attention towards the minimax setting (Kpotufe and Martinet, 2018; Hanneke and Kpotufe, 2019, 2020; Mousavi Kalan et al., 2020; Mehta et al., 2012). The worst-case error in meta-learning is particularly important in safety-critical systems, for example in medical diagnosis.

Mousavi Kalan et al. (2020) study the minimax risk of transfer learning. In their setting, the learner is provided with a large amount of data from a single source task and is tasked with generalizing to a target task with a limited amount of data. They assume relatedness between tasks by imposing closeness in parameter-space (whereas in our setting, we assume closeness in distribution via KL divergence). They prove only lower bounds, but notably generalize beyond the linear setting towards single layer neural networks.

There is a large volume of prior work studying upper-bounds on generalization error in multi-task environments (Ben-David and Borbely, 2008; Ben-David et al., 2010; Pentina and Lampert, 2014; Amit and Meir, 2017; Mehta et al., 2012). While the approaches in these works vary, one common factor is the need to characterize task-relatedness. Broadly, these approaches either assume a shared distribution for sampling tasks (Baxter, 2000; Pentina and Lampert, 2014; Amit and Meir, 2017), or a measure of distance between distributions (Ben-David and Borbely, 2008; Ben-David et al., 2010; Mohri and Medina, 2012). Our lower-bounds utilize a weak form of task relatedness, assuming that the environment contains a finite set that is suitably separated in parameter space but close in KL divergence—this set of assumptions also arises often when computing i.i.d minimax lower bounds (Loh, 2017).

One practical approach to meta-learning is learning a linear mapping on top of a learned feature space. Prototypical Networks (Snell et al., 2017) effectively learn a discriminative embedding function and performs linear classification on top using the novel task data. Analyzing these approaches is challenging due to metric-learning inspired objectives (that require non-i.i.d sampling) and the simultaneous learning of feature mappings and top-level linear functions. Though some progress has been made (Jin et al., 2009; Saunshi et al., 2019; Wang et al., 2019; Du et al., 2020). Maurer (2009), for example, explores linear models fitted over a shared linear feature map in a Hilbert space. Our results can be applied in these settings if a suitable packing of the representation space is defined.

Other approaches to meta-learning aim to parameterize learning algorithms themselves. Traditionally, this has been achieved by hyper-parameter tuning (Rasmussen and Nickisch, 2010; MacKay et al., 2019) but recent fully parameterized optimizers also show promising performance in deep neural network optimization (Andrychowicz et al., 2016), few-shot learning (Ravi and Larochelle, 2016), unsupervised learning (Metz et al., 2019), and reinforcement learning (Duan et al., 2016). Yet another approach learns the initialization of task-specific parameters, that are further adapted through regular gradient descent. Model-Agnostic Meta-Learning (Finn et al., 2017), or MAML, augments the global parameters with a meta-initialization of the weight parameters. Grant et al. (2018) recast MAML in terms of inference in a Bayesian hierarchical model. In Section 5, we consider learning in a hierarchical environment of linear models and provide both lower and upper bounds on the error of estimating the parameters of a novel linear regression problem.

Lower bounding estimation error is a critical component of understanding learning problems (and algorithms). Accordingly, there is a large body of literature producing such lower bounds (Khas’ minskii, 1979; Yang and Barron, 1999; Loh, 2017). We focus on producing lower-bounds for parameter estimation using local packing sets, but expect that extending these results to density estimation or non-parametric estimation is feasible.

3 Novel task environment risk

Most existing theoretical work studying out-of-distribution generalization focuses on providing upper-bounds on generalization performance (Ben-David et al., 2010; Pentina and Lampert, 2014; Amit and Meir, 2017). We begin by instead exploring the converse: what is the best performance we can hope to achieve on any given task in the environment? After introducing notation and minimax risks, we then show how these ideas can be applied, using meta linear regression as an example.

A full reference table for notation can be found in Appendix A and a short summary is given here. We consider algorithms that learn in an environment , with data domain and a space of distributions with support . In the typical i.i.d setting, the algorithm is provided training data , consisting of i.i.d samples from .

In the standard multi-task setting, we sample training data from a set of training tasks . We extend this to a meta-learning, or novel-task setting by first drawing : training data points from the first distributions, for a total of samples. We call this the meta-training set. We then draw a small sample of novel data, called a support set, , from .

Consider a symmetric loss function for non-decreasing and arbitrary metric . We seek to estimate the output of , a functional that maps distributions to a metric space . For example, may describe the coefficient vector of a high-dimensional hyperplane when is a space of linear models, and may be the Euclidean distance.

The i.i.d minimax risk

Before studying the meta-learning setting, we first begin with a definition of the i.i.d minimax risk that measures the worst-case error of the best possible estimator,

| (1) |

For notational convenience, we denote the output of by . The estimator for is denoted, , and maps samples from to an estimate of .

Novel-task minimax risk

In the novel-task setting, we wish to estimate , the parameters of the novel task distribution . We consider two-stage estimators for . In the first stage, the meta-learner uses a learning algorithm , that maps the meta-training set to an estimation algorithm, . In the second stage, the learner computes , the estimate of .

The novel-task minimax risk is given by,

| (2) |

where . This ensures a degree of relatedness between the novel and meta-training tasks.

The estimator for now depends additionally on the samples in , where only samples from are available to the learner. Thus, addresses the domain shift expected at test-time in the meta-learning setting and allows the learner to use data from multiple tasks. The goal of is to learn an inductive bias from such that a good estimate is possible with only data points from . In this setting, is equivalent to the number of shots in few-shot learning.

An example with meta-linear regression

We present here a short summary based on meta linear regression, which we will analyze in more detail in Section 5.

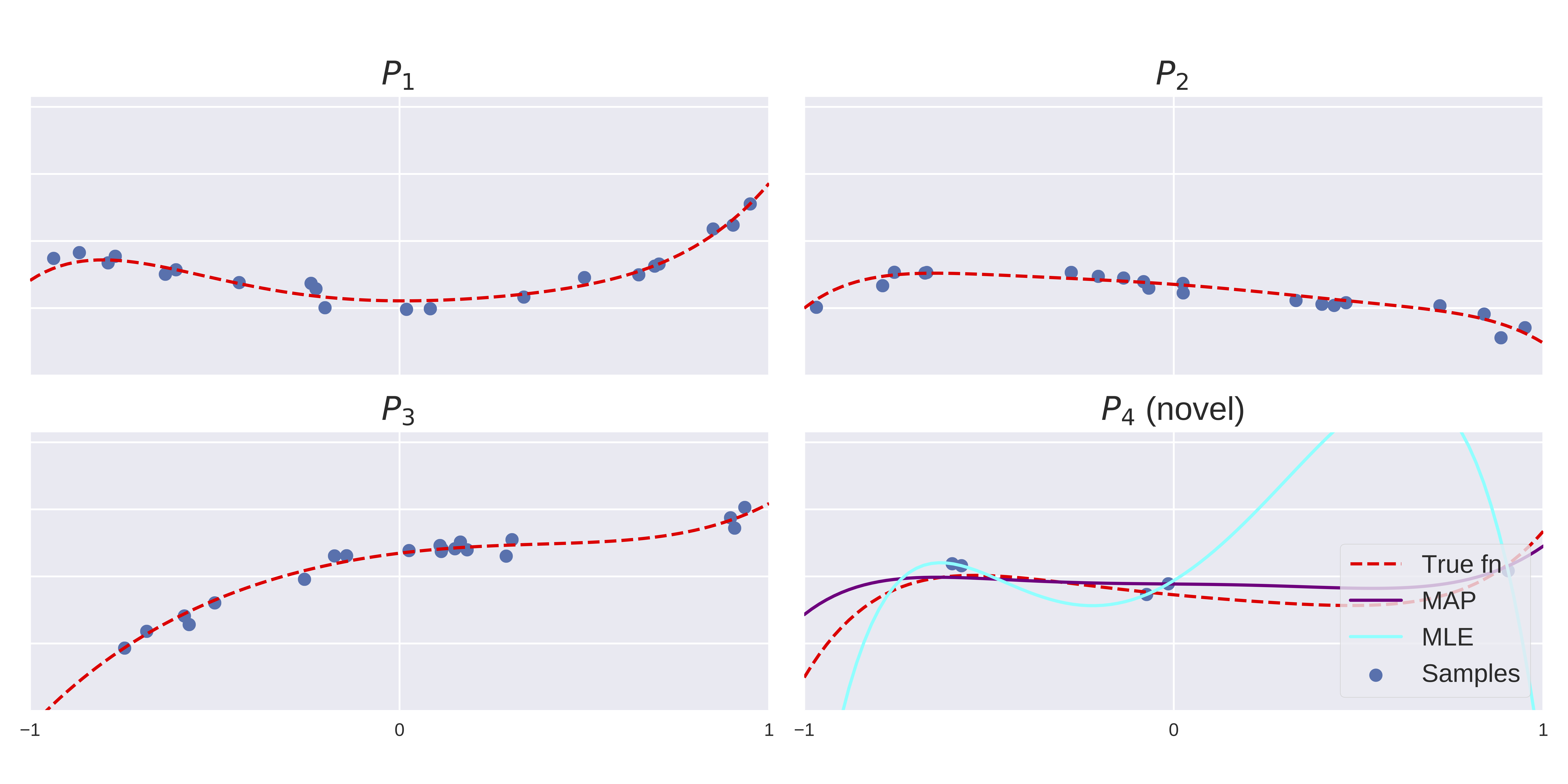

In Figure 1, we show observed data samples from a family of polynomial regression models. Our aim is to output an algorithm which recovers the parameters of a new polynomial function from limited observations–we choose a MAP estimator which is described fully in Section 5. In the bottom right, we are given only 5 data points from a novel task distribution and estimate the parameters of the model with both the MLE and MAP estimators — the MLE overfits the support set while the MAP estimator is close to the true function.

In terms of the terminology used above, the set,

is the space of polynomial regression models, parameterized by . For this problem, we take . In Figure 1, tasks are generated with , for unknown, sparse, . Thus, each model is a polynomial function with few large coefficients. The algorithm , first takes samples from and computes an estimate, . This estimate of is then used to compute . Note that this approach is able to learn the correct inductive bias from the data, without requiring a carefully designed regularizer. The lower bounds we derive in Section 4 can be applied to problems of this general type, and the upper and lower bounds in Section 5 apply specifically to meta-learning linear regression.

4 Information theoretic lower bounds on novel task generalization

In this section, we first present our most general result: Theorem 1. Using this, we derive Corollary 1 that gives a lower bound in terms of the sample size in the training and novel tasks. Corollary 1 recovers a well-known i.i.d lower bound (Theorem 2) when , and, importantly, highlights that the novel task data is significantly more valuable than the training task data. Additionally, we provide a specialized bound that applies when the environment is partially observed — proving that in this setting training task data is insufficient to drive the minimax risk to zero.

In Theorem 1, we assume that contains distinct -separated distributions but only tasks are visible to the learner. Intuitively, the error rate lower-bound shrinks as the amount of information shared between the training tasks and the novel task grows. All proofs are given in Appendix B.1. Recall for non-decreasing and arbitrary metric .

Theorem 1 (Minimax novel task risk lower bound).

Let contain distinct distributions such that and for all . Let be a random ordering of the elements, and be a vector of i.i.d samples from . Further, define to be an matrix whose column consist of i.i.d samples from . Then,

Note that is a property of the so-called packing set, , and may depend on the sample size, , and other properties of . For example, practical instances of this bound typically require or similar, as in Theorem 3 below. To derive this result, we bound the statistical estimation error by the error on a corresponding decoding problem where we must predict the novel task index, given the meta-training set and . Fano’s inequality provides best-case error rates for this problem.

Using Theorem 1, we derive our first bound on the novel-task minimax risk that depends on the number of meta-training tasks () and datapoints per training task (, ), via a local-packing argument. The following corollary implies that if we have meta-training tasks in our -packing that are close (in terms of their pairwise KL distance), then learning a novel task from training samples drawn from the meta-training tasks requires significantly more examples; in particular, learning the novel task from samples drawn from the meta training set requires times the sample complexity of the novel task. This matches our intuition that learning the novel task implies the ability to distinguish it from all well-separated meta-training tasks.

Corollary 1.

Assume the same setting as in Theorem 1. Then,

A tighter bound on partially observed environments

We now consider the special case of Theorem 1 when , meaning that the meta-training tasks cannot cover the full packing set. In this setting, we prove that no algorithm can generalize perfectly to tasks in unseen regions of the space with small , regardless of the number of data points observed in each meta-training task.

Corollary 2.

Assume the same setting as in Theorem 1, with . Then,

In this work, we have focused on the setting where contains an equal number of samples from each of the meta-training tasks — this is the sampling scheme shown in Figure 2. However, it is possible to extend these results to different sampling schemes for . For example, in the appendix we derive bounds with as a mixture distribution. Surprisingly, despite task identity being hidden from the learner, the asymptotic rate for these two sampling schemes match.

4.1 Measuring Task-Relatedness

The use of local packing requires the design of an appropriate set of distributions whose corresponding parameters are -separated but maintain small KL divergences. In the multi-task setting such an assumption is intuitively reasonable: challenging tasks should require separated parameters for ideal explanations (-separated) but should satisfy some relatedness measure (small KL). Importantly, these parameters can depend on sample size and other problem-specific variables. As we will see shortly, lower bounds on minimax risk in the i.i.d setting may also assume the same notion of relatedness for the local-packing in .

Task relatedness is a necessary feature for upper-bounds on novel task generalization, but are typically difficult to define (see e.g. Ben-David and Borbely (2008)). Our lower bounds utilize a relatively weak notion of task-relatedness, and thus may be overly pessimistic compared to the upper bounds computed in existing work. However, task relatedness of this form can be formulated in a representation space shared across tasks and thus can be applied in settings like those explored by e.g. Du et al. (2020). Deriving lower bounds under the different task relatedness assumptions present in the literature would make for exciting future work.

4.2 Comparison to risk of i.i.d learners

From the statement of Theorem 1 it is not clear how this lower-bound compares to that of the i.i.d learner which has access only to the samples from . To investigate the benefit of additional meta-training tasks, we compare our derived minimax risk lower bounds to those achieved by i.i.d learners. To do so, we revisit standard minimax lower bounds that can be found in e.g. Loh (2017).

Theorem 2 (IID minimax lower-bound).

Suppose satisfy for all . Then,

We include a proof of this result in Appendix B.1, using local-packing as in our meta-learning bounds. As hoped, Corollary 1 recovers Theorem 2 when there are no training tasks available. Moreover, this i.i.d bound is strictly larger than the one computed in Corollary 1 in general. Note that while this i.i.d minimax risk is asymptotically tight for several learning problems (Loh, 2017; Raskutti et al., 2011), there is no immediate guarantee that the same is true for our meta-learning minimax bounds. We investigate the quality of these bounds by providing comparable upper bounds in the next section.

5 Analysis of a hierarchical Bayesian model of meta-learning

Our goal is to analyze the sample complexity of meta-learning for linear regression, where samples are drawn from multiple meta-training tasks and we want to generalize to a new task with only a few data points. After introducing the setting, we will compute lower-bounds on the minimax risk using our results from Section 4, revealing a scaling on the meta-training sample complexity. Following the lower bound, we derive an accompanying upper-bound on the risk of a MAP estimator, derived from an empirical Bayes estimate over a hierarchical Bayesian model. Asymptotic analysis of this bound reveals that if the observed samples from the novel task vary considerably more than the task parameters, then observing more meta-training samples may significantly improve convergence in the small regime. This is validated empirically in Section 6.

For , where is the total number of tasks, we define,

Each task has some design matrix and unknown parameters . For simplicity, we assume known isotropic noise models and that for all , with .

Our meta learner will fit the data using an empirical Bayes estimate in a hierarchical Bayesian model:

We will consider the Maximum a Posterior estimator,

and will characterize its risk, , where the expectation is with respect to sampled data only. The posterior distribution under the Empirical Bayes estimate for is given in Appendix C.2. The derivation is standard but dense and we recommend dedicated readers to consult Gelman et al. (2013), or an equivalent text, for more details.

5.1 Minimax lower bounds

We now compute lower bounds for parameter estimation with meta-learning over multiple linear regression tasks. Beginning with a definition of the space of data generating distributions,

where are the parameters to be learned, and is the design matrix of each linear regression task in the environment. We write , which we assume is bounded for all and (an assumption that is validated for random Gaussian matrices by Raskutti et al. (2011)).

Theorem 3 (Meta linear regression lower bound).

Consider defined as above and let . If and , then,

The proof is given in Appendix B.5. We see that the size of the meta-training set has an inverse exponential scaling in the dimension, . This reflects the complexity of the space growing exponentially in dimensions and the need for a matching growth in data size to cover the environment sufficiently.

5.2 Minimax upper bounds

To compute upper bounds on the estimation error, we require an additional assumption. Namely, we will assume that the design matrices also have bounded minimum singular values, (see Raskutti et al. (2011) for some justification). For the upper-bounds, we allow the bounds on the singular values of the design matrices and the observation noise in the novel task to be different than those in the meta-training tasks. We note that we can still recover the setting assumed in the lower bounds, where all tasks match on these parameters, as a special case.

The learner observes data points from each linear regression model in . We then bound the error of estimating , for which samples are available.

The expected error rate of the MAP estimator can be decomposed as the posterior variance and bias squared. In the appendix we provide a detailed derivation of these results. The bound depends on dimensionality , the observation noise in each task , the number of tasks , the number of data points in each meta-training task , and the number of data points in the novel task .

Theorem 4 (Meta Linear Regression Upper Bound).

Let be the maximum-a-posteriori estimator, . Then,

where,

Expectations are taken over the data conditioned on . Additional terms not depending on are defined in Appendix C.2.

While the bounds presented in Theorem 4 are relatively complicated, we can probe the asymptotic convergence of the MAP estimator to the true task parameters, . In the following section, we will discuss some of the consequences of this result and its implications for our lower bounds.

5.3 Asymptotic behavior of the MAP estimator

We first notice that when is small, the risk cannot be reduced to zero by adding more meta-training data. Recent work has suggested such a relationship may be inevitable (Hanneke and Kpotufe, 2020). Our lower bound presented in Corollary 2 agrees that more samples from a small number of meta-training tasks will not reduce the error to zero. However, unlike our lower bounds based on local packing, the lower bounds presented in this section predict that if the meta-training tasks cover the space sufficiently then an optimal algorithm might hope to reduce the error entirely with enough samples. We hypothesize that this gap is due to limitations in the standard proof techniques we utilize for the lower-bounds when the number of tasks grows, and expect a sharper bound may be possible.

To emulate the few-shot learning setting where is relatively small, we consider , with and fixed. In this case, the risk is bounded as,

where , is the ratio of the observation noise to the variance in sampling , and is the condition number of the design matrices. This leads to a key takeaway: if the observed samples from vary considerably more than the parameters , then observing more samples in will significantly improve convergence towards the true parameters in the small regime. Further, adding more tasks (increasing ) also improves these constant factors by removing the dependence on the condition number, .

6 Empirical Investigations

In this section, we provide additional quantitative exploration of the upper bound studied in Section 5. The aim is to take steps towards relating the bounds to experimental results; we know of little theoretical work in meta-learning that attempt to relate their results to practical empirical datasets. Full details of the experiments in this section can be found in Appendix D.

6.1 Hierarchical Bayes polynomial regression

We first focus on the setting of polynomial regression over inputs in the range . Some examples of these functions and samples are presented in Figure 1, alongside the MAP and MLE estimates for the novel task.

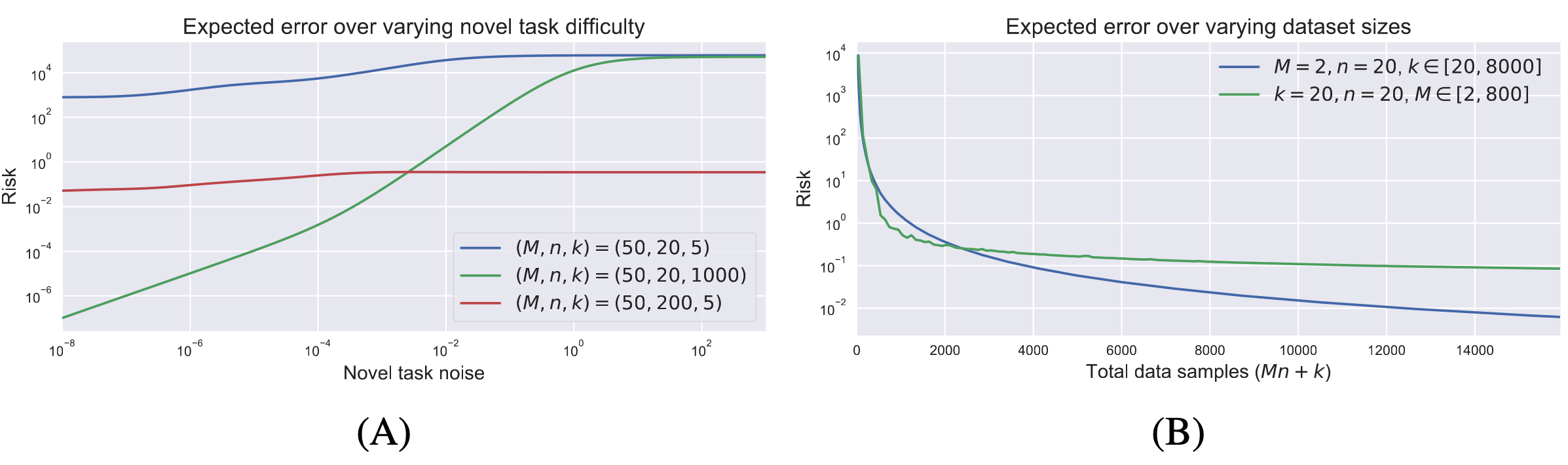

Figure 2 shows the analytical expected error rate (risk) under various environment settings. We observe that even in this simple hierarchical model, the estimator exhibits complex behavior that is correctly predicted by Theorem 4. In Figure 2A, we varied the novel task difficulty by increasing the novel task observation noise (). We plot three curves for three different dataset size configurations. When the novel task is much noisier than the source tasks, it is greatly beneficial to add more meta-training data (blue vs. red). And while larger made little difference when the novel task was relatively difficult (blue vs. green), the expected loss was orders of magnitude lower when the novel task became easier. In Figure 2B, we fixed the relative task difficulty and instead varied and . The x-axis now indicates the total data available to the learner. We observed that adding more tasks has a large effect in the low-data regime but, as predicted, the error has a non-zero asymptotic lower-bound — eventually it is more beneficial to add more novel-task data samples.

These empirical simulations verify that our theoretical analysis is predictive of the behavior of this meta learning algorithm, as each of these observations can be inferred from Theorem 4. While this model is simple, it captures key features of and provides insight into the more general meta-learning problem. We also explored a non-linear sinusoid meta-regression problem (Finn et al., 2017), finding that our theory is largely predictive of the general trends in this setting too.

6.2 Sinusoid regression with MAML

Following the connections between MAML and hierarchical Bayes explored by Grant et al. (2018), we also explored regression on sinusoids using MAML. Our aim was to investigate how predictive our linear theory is for this highly non-linear problem setting. As in Finn et al. (2017), we sample sinusoid functions by placing a prior over the amplitude and phase. In other works (Finn et al., 2017; Grant et al., 2018) the same prior is used for the training and testing stages. However, to better measure generalization to novel tasks we use different prior distributions when training versus evaluating the model.

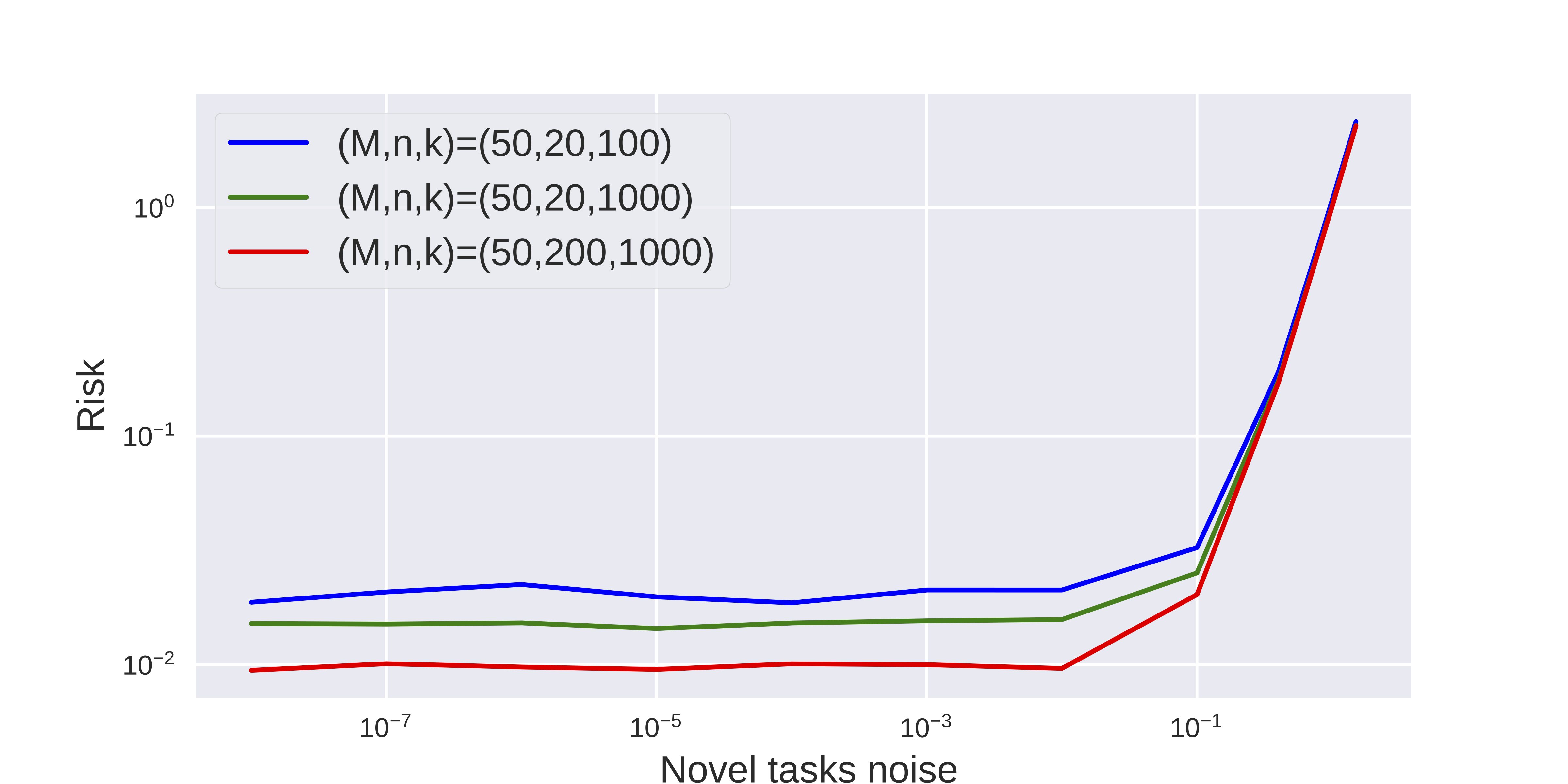

We display the risk averaged over 30 trials in Figure 3. We varied the novel task difficulty by increasing the observation noise in the novel task. We plot separate curves for different dataset size configurations, and observe that the empirical results align fairly well with the results derived by sampling the hierarchical model (Figure 2A). Adding more meta-training data (increasing ) is beneficial (green vs. yellow) and adding more test data-points (higher ) is also beneficial (red vs. green). Here however, these relationships did not interact with the task difficulty, as the wins for increased meta-training and meta-testing data were consistent, until task noise prevents any setting of the model from performing the task.

7 Conclusion

Meta-learning algorithms identify the inductive bias from source tasks and make models more adaptive towards unseen novel distribution. In this paper, we take initial steps towards characterizing the difficulty of meta-learning and understanding how these limitations present in practice. We have derived both lower bounds and upper bounds on the error of meta-learners, which are particularly relevant in the few-shot learning setting where is small. Our bounds capture key features of the meta-learning problem, such as the effect of increasing the number of shots or training tasks. We have also identified a gap between our lower and upper bounds when there are a large number of training tasks, which we hypothesize is a limitation of the proof technique that we applied to derive the lower bounds — suggesting an exciting direction for future research.

8 Acknowledgements

This work benefited greatly from the input of many other researchers. In particular, we extend our thanks to Shai Ben-David, Karolina Dziugaite, Samory Kpotufe, and Daniel Roy for discussions and feedback on the results presented in this work. We thank Ahmad Beirami and anonymous reviewers for their valuable feedback that led to significant improvements to this paper. We also thank Elliot Creager, Will Grathwohl, Mufan Li, and many of our other colleagues at the Vector Institute for feedback that greatly improved the presentation of this work. Resources used in preparing this research were provided, in part, by the Province of Ontario, the Government of Canada through CIFAR, and companies sponsoring the Vector Institute (www.vectorinstitute.ai/partners).

References

- Meta-learning by adjusting priors based on extended PAC-bayes theory. arXiv preprint arXiv:1711.01244. Cited by: §1, §2, §3.

- Learning to learn by gradient descent by gradient descent. In Advances in Neural Information Processing Systems 29, pp. 3981–3989. Cited by: §2.

- A model of inductive bias learning. Journal of artificial intelligence research 12, pp. 149–198. Cited by: §2, §2.

- A theory of learning from different domains. Machine learning 79 (1-2), pp. 151–175. Cited by: §1, §2, §3.

- A notion of task relatedness yielding provable multiple-task learning guarantees. Machine learning 73 (3), pp. 273–287. Cited by: §2, §4.1.

- Generalize across tasks: efficient algorithms for linear representation learning. In Proceedings of the 30th International Conference on Algorithmic Learning Theory, A. Garivier and S. Kale (Eds.), Proceedings of Machine Learning Research, Vol. 98, Chicago, Illinois, pp. 235–246. External Links: Link Cited by: §1.

- A theoretical analysis of the number of shots in few-shot learning. arXiv preprint arXiv:1909.11722. Cited by: §1.

- Elements of information theory. John Wiley & Sons. Cited by: Lemma 1.

- Learning-to-learn stochastic gradient descent with biased regularization. In Proceedings of the 36th International Conference on Machine Learning, K. Chaudhuri and R. Salakhutdinov (Eds.), Proceedings of Machine Learning Research, Vol. 97, Long Beach, California, USA, pp. 1566–1575. Cited by: §1.

- Few-shot learning via learning the representation, provably. arXiv preprint arXiv:2002.09434. Cited by: §2, §4.1.

- RL$^2$: fast reinforcement learning via slow reinforcement learning. CoRR abs/1611.02779. External Links: 1611.02779 Cited by: §2.

- Transmission of information. A Statistical Theory of Communication. Cited by: Lemma 1.

- Model-agnostic meta-learning for fast adaptation of deep networks. In Proceedings of the 34th International Conference on Machine Learning-Volume 70, pp. 1126–1135. Cited by: §D.2, §1, §2, §6.1, §6.2.

- Bayesian data analysis. Chapman and Hall/CRC. Cited by: §5.

- Recasting gradient-based meta-learning as hierarchical Bayes. arXiv preprint arXiv:1801.08930. Cited by: §1, §2, §6.2.

- On the value of target data in transfer learning. In Advances in Neural Information Processing Systems 32, pp. 9871–9881. Cited by: §2.

- A no-free-lunch theorem for multitask learning. arXiv preprint arXiv:2006.15785. Cited by: §2, §5.3.

- Regularized distance metric learning:theory and algorithm. In Advances in Neural Information Processing Systems 22, pp. 862–870. Cited by: §2.

- A lower bound on the risks of non-parametric estimates of densities in the uniform metric. Theory of Probability & Its Applications 23 (4), pp. 794–798. Cited by: §2, Lemma 2.

- Provable guarantees for gradient-based meta-learning. arXiv preprint arXiv:1902.10644. Cited by: §1.

- Marginal singularity, and the benefits of labels in covariate-shift. In Proceedings of the 31st Conference On Learning Theory, S. Bubeck, V. Perchet, and P. Rigollet (Eds.), Proceedings of Machine Learning Research, Vol. 75, , pp. 1882–1886. Cited by: §2.

- On lower bounds for statistical learning theory. Entropy 19 (11), pp. 617. Cited by: §2, §2, §4.2, §4.2, Lemma 3.

- Information-theoretic limitations on novel task generalization. Neurips 2019 Workshop on Machine Learning with Guarantees. Cited by: §2.

- Self-tuning networks: bilevel optimization of hyperparameters using structured best-response functions. In 7th International Conference on Learning Representations, Cited by: §2.

- Transfer bounds for linear feature learning. Machine learning 75 (3), pp. 327–350. Cited by: §2.

- Minimax multi-task learning and a generalized loss-compositional paradigm for mtl. Advances in Neural Information Processing Systems 25, pp. 2150–2158. Cited by: §2, §2.

- Meta-learning update rules for unsupervised representation learning. In 7th International Conference on Learning Representations, Cited by: §2.

- New analysis and algorithm for learning with drifting distributions. In International Conference on Algorithmic Learning Theory, pp. 124–138. Cited by: §2.

- Minimax lower bounds for transfer learning with linear and one-hidden layer neural networks. In Advances in Neural Information Processing Systems, H. Larochelle, M. Ranzato, R. Hadsell, M. F. Balcan, and H. Lin (Eds.), Vol. 33, pp. 1959–1969. External Links: Link Cited by: §2, §2.

- A PAC-bayesian bound for lifelong learning. In International Conference on Machine Learning, pp. 991–999. Cited by: §2, §3.

- Minimax rates of estimation for high-dimensional linear regression over -balls. IEEE transactions on information theory 57 (10), pp. 6976–6994. Cited by: §4.2, §5.1, §5.2.

- Gaussian processes for machine learning (GPML) toolbox. J. Mach. Learn. Res. 11, pp. 3011–3015. Cited by: §2.

- Optimization as a model for few-shot learning. International Conference on Learning Representations. Cited by: §1, §2.

- An empirical bayes approach to statistics. In Proceedings of the Third Berkeley Symposium on Mathematical Statistics and Probability, Volume 1: Contributions to the Theory of Statistics, Berkeley, Calif., pp. 157–163. Cited by: §1.

- A theoretical analysis of contrastive unsupervised representation learning. In International Conference on Machine Learning, pp. 5628–5637. Cited by: §2.

- Prototypical networks for few-shot learning. In Advances in Neural Information Processing Systems, pp. 4077–4087. Cited by: §2.

- A perspective view and survey of meta-learning. Artificial intelligence review 18 (2), pp. 77–95. Cited by: §2.

- Matching networks for one shot learning. In Advances in neural information processing systems, pp. 3630–3638. Cited by: §1.

- Some matrix-inequalities and metrization of matric space. Cited by: Lemma 8.

- Multitask metric learning: theory and algorithm. In Proceedings of Machine Learning Research, K. Chaudhuri and M. Sugiyama (Eds.), Proceedings of Machine Learning Research, Vol. 89, , pp. 3362–3371. Cited by: §2.

- Information-theoretic determination of minimax rates of convergence. Annals of Statistics, pp. 1564–1599. Cited by: §2.

Appendix A Notation

| Description | |

|---|---|

| The domain of the data, e.g. | |

| The range of the data, e.g. | |

| The product space | |

| A collection of distributions over | |

| A (finite) subset of distributions in | |

| An element of | |

| The product distribution, whose samples correspond to independent draws from | |

| The product distribution, , for | |

| The mixture distribution, , for | |

| A metric space, containing parameters for each distribution | |

| A functional, mapping distributions in to parameters in | |

| An estimator | |

| The mutual information between random variables and . | |

| Denotes training datasets drawn i.i.d from some . Typically , | |

| Denotes a meta-training set drawn i.i.d from | |

| , for | Indicates the set |

| The -norm ball of radius , centered at . |

Appendix B Lower Bound Proofs

We will make use of several standard results below, which we present here.

Lemma 1.

Lemma 2.

Mutual information equality (Khas’ minskii, 1979) Consider random variables , then,

Lemma 3.

Local packing lemma (Loh, 2017) Consider distributions . Let be a random variable distributed uniformly on and let be a vector of i.i.d samples from . Then,

We will require a novel local packing bound for the novel-task risk, which we present in Lemma 5.

b.1 IID Lower Bound

We first prove the i.i.d result, which will serve as a guide for our novel lower bounds.

See 2

Proof.

First, notice that,

Now define the decision rule,

with ties broken arbitrarily. We proceed by bounding the expected loss. First, using Markov’s inequality,

Next, consider the case . Through the triangle inequality,

Thus, the probability that the distance is less than is at least as large as the probability that the estimator is correct.

Now, using Fano’s inequality with , and (and the corresponding Markov chain

), we have,

Combining the above inequalities with the Local Packing Lemma gives the final result. ∎

b.2 Proof of Theorem 1

See 1

Proof.

As in the i.i.d case, we first bound the supremum from below with an average,

where the inner sum is over all length orderings, with .

As before, we consider the following estimator,

Using Markov’s inequality, and then following the proof of Theorem 2, we have,

with the use of Fano’s inequality, we arrive at,

Conditioned on , each element of and are independent but they are not identically distributed. Thus, with the application of Lemma 2,

The result follows by combining these inequalities. ∎

Remark

In the above proof of Theorem 1, we did not need to make use of the form of the distribution of , only that the correct graph structure was observed. This grants us some flexibility, which we utilize later in Section B.4 to prove lower bounds for mixture distributions.

We now proceed with proofs of the corollaries of Section 4.

b.3 Local packing results

Lemma 4 (Meta-learning local packing).

Consider the same setting as in Theorem 1, then

Proof.

There are orderings on the first indices, given the . We introduce the following notation,

As in previous proofs, we notice that we can write,

First, note that we can upper bound , where denotes a single row in . Further,

We will use convexity of the KL divergence to upper bound this quantity. Each distribution is an average over a random selection of index orderings.

When applying convexity, all pairs of selections that exactly match will lead to a KL divergence of zero. There are the same number of these in each component of . Thus we care only about selections that contain either or such that matching pairs of distributions exactly is not possible. Further, we need only consider pairs of product distributions who differ only in a single, identical position.

Each of the above described pairs of distributions has KL divergence equal to . We conclude by counting the total number of orderings producing such pairs. First, there are choices for the index of and . Then, there are total orderings of the remaining elements. Thus, we have,

∎

These results together provide an immediate proof of Corollary 1.

See 1

Proof.

Putting together the results of Theorem 1, Lemma 3, and Lemma 4, and using the fact that , the result follows immediately. ∎

See 2

Proof.

This result follows as an application of the data processing inequality. Notice that forms a Markov chain. Thus,

by the data processing inequality. We can compute in closed form:

The proof is completed by plugging in the i.i.d local packing bound alongside the above. ∎

b.4 Bounds using mixture distributions

In this section we introduce tools to lower bound the minimax risk when the meta-training set is sampled from a mixture over the meta-training tasks, . We note first that Theorem 1 can be reproduced exactly when . Thus, we need only provide a local packing bound for the mixture distribution. In Lemma 5 we provide such a lower bound for the special case where , so that data is sampled from a mixture over the entire environment.

Lemma 5 (Leave-one-task-out mixture local packing).

Let contain distinct distributions such that for all and let . Let be a random ordering of the elements, and define to be a vector of i.i.d samples from . Then,

Proof.

From Lemma 3 (and some simple arithmetic) we have,

Note that by the definition of the mixture distribution,

Using the convexity of the KL divergence,

Noting for the last step that the KL is zero if and only if the distributions are the same almost everywhere. ∎

b.5 Proof of Hierarchical linear model lower bound

Recall that the space of distributions we consider is given by,

See 3

Proof.

The proof consists of two steps, we first construct a -packing of . Then, we upper bound the KL divergence between two distributions in this packing and use Corollary 1 to give the desired bound.

The maximal packing number for the unit 2-norm ball can be bounded by the following,

We use a common scaling trick. First, through this bound, we can build a packing set, , with packing radius , giving . We define a new packing set of the same cardinality by taking for all (requiring ). Giving for all ,

similarly, .

We now proceed with bounding the KL divergences.

where . We write and , then,

The second line is derived using the Cauchy-Schwarz inequality, and the final inequality uses . We will not proceed by invoking Corollary 1 on this packing set. This will require choosing to achieve our desired rate and will in turn impose constraints on the problem dimensions to ensure the packing is valid.

Now, using Corollary 1,

Choosing gives,

for . To enforce , we further require that,

Additionally, we may only consider packing sets with KL divergence no more than , hence we also require that,

Thus,

∎

Appendix C Hierarchical Bayesian Linear Regression Upper Bounds

c.1 Some useful linear algebra results

Let denotes the maximum singular value of ; denotes the minimum singular value of .

Lemma 6.

Singular value of sum of two matrices Let , then . Furthermore, if are positive definite, .

Proof.

The first result follows immediately from the triangle inequality of the matrix norm .

For the second result, suppose that and are positive definite.

| (3) | ||||

| (4) | ||||

| (5) | ||||

| (6) |

Now, notice that is similar to the matrix , which exists as is positive definite. This matrix is itself positive definite, and thus has non-negative eigenvalues, meaning also has all positive eigenvalues. Thus, , for all , and,

| (8) | |||||

| (9) | |||||

| (10) | |||||

| (11) | |||||

∎

Lemma 7.

Singular value of product of two matrices Let , then , and, .

First we prove the maximum singular value.

Proof.

| (12) | ||||

| (13) | ||||

| (14) | ||||

| (15) | ||||

| (16) | ||||

| (17) |

The minimum singular value follows a similar structure. Suppose is full rank,

| (18) | ||||

| (19) | ||||

| (20) | ||||

| (21) | ||||

| (22) | ||||

| (23) |

If is not full rank, then . ∎

Lemma 8.

(Von Neumann’s Trace Inequality (Von Neumann, 1937)) Given two complex matrices , with singular vales and respectively. We have,

This is a classic result whose proof we exclude.

As a direct consequence of Lemma 8, .

c.2 Posterior estimate

For reference, we reproduce the posterior estimate for the true parameters . As a shorthand, we write .

| (24) | ||||

| (25) | ||||

| (26) |

| (27) | ||||

| (28) | ||||

| (29) | ||||

| (30) |

c.3 Upper bound for meta linear regression

Before proceeding with the proof, we introduce some additional notation and technical results.

Additional notation

To alleviate (only a little of) the notational clutter, we will define the following quantities,

-

-

.

-

-

-

-

-

-

-

-

-

-

-

-

As we have uniform bounds on the singular values of all design matrices, we introduced an auxillary matrix whose largest and smallest singular values are given by and respectively.

We will also write , and throughout.

Bias-Variance Decomposition

As is standard, we can decompose the risk into the bias and variance of the estimator:

| (31) | ||||

| (32) | ||||

| (33) |

In the next two sections, we will derive upper bounds on the bias and variance terms above.

c.3.1 Variance technical lemmas

We first decompose the variance into contributions from two sources: the variance from data in the novel task and the variance from data in the source tasks.

Lemma 9.

(Variance decomposition) Let as defined above. Then the variance of the estimator can be written as

Proof.

| (34) | ||||

| (35) | ||||

| (36) | ||||

| (37) |

∎

We will now work towards a bound for each of the two variance terms in Lemma 9 separately. To do so, we will need to produce bounds on the singular values of terms appearing in Lemma 9.

We begin with the covariance term .

Lemma 10.

(Novel task covariance singular value bound) Let , and be as defined above. Then,

Proof.

Using Lemma 6, we can bound as follows,

| (38) | ||||

| (39) | ||||

| (40) | ||||

| (41) | ||||

| (42) |

Now, using the auxillary matrix ,

| (43) | ||||

| (44) | ||||

| (45) | ||||

| (46) |

Next, we deal with terms appearing corresponding to the data from the source tasks.

Lemma 11.

(Source tasks covariance singular value bound) Let , and write and . Then,

and,

Proof.

In Lemma 11, we introduced additional condition numbers, which we can bound as follows,

| (69) | ||||

| (70) |

c.3.2 Variance upper bound

We are now ready to put the above technical results together to achieve a bound on the variance of the estimator.

Lemma 12.

(Variance bound)

Proof.

First, by Lemma 9 we can decompose the overall variance into two terms:

We deal with the left term first.

Using trace permutation invariance and the von Neumann trace inequality (Lemma 8). We can upper bound the left variance term as follows,

| (71) | ||||

| (72) | ||||

| (73) |

For the second variance term, we observe that,

| (74) | |||

| (75) | |||

| (76) | |||

| (77) | |||

| (78) |

Using Lemma 11, we have,

| (79) | ||||

| (80) | ||||

| (81) | ||||

| (82) | ||||

| (83) | ||||

| (84) |

Finally, rearranging and using Lemma 10, we can bound the sum of the two terms in the variance as follows,

| (85) | ||||

| (86) | ||||

| (87) | ||||

| (88) | ||||

| (89) |

∎

c.3.3 Bounding the Bias

Lemma 13.

(Bias upper bound) Given , we have,

Proof.

The bias can be computed as follows,

| (90) | ||||

| (91) | ||||

| (92) | ||||

| (93) | ||||

| (94) | ||||

| (95) | ||||

| (96) | ||||

| (97) |

where we wrote , and . Thus,

We can bound each term in turn. First, note that , and we have bounded above. We can write,

The last line follows from the fact that the parameters lie in a ball of unit radius. We now proceed by bounding the sum by times the supremum — with some light abuse of notation,

Thus, overall the convergence of the bias is bounded by,

∎

Appendix D Additional Experiment Details

d.1 Hierarchical Bayes Evaluation

We sample linear models according to the hierarchical model in Section 5, with design matrices constructed by uniformly sampling points, , and storing the vector , for in each row of .

To produce the plots in Figure 2 we computed the average loss over 100 random draws of the training data and labels from the same set of fixed values. The values were sampled once from the hierarchical model with , and

Code to reproduce these plots is provided in the supplementary materials with our submission.

d.2 Sinusoid Regression with MAML

| Hyper parameters | Description |

|---|---|

| noise at test time. | |

| M | number of tasks at the training tasks |

| number of tasks at the testing tasks | |

| eps_per_batch | episode per batch |

| train_ampl_range | range of amplitude at training |

| train_phase_range | range of phase at training |

| val_ampl_range | range of amplitude at testing |

| val_phase_range | range of phase at testing |

| inner_steps | number of steps of Maml |

| inner_lr | learning rate used to optimize parameter of the model |

| meta_lr | used to optimize parameter of the meta-learner |

| n | number of datapoints at training tasks(support set) |

| k | number of datapoints at testing tasks (support set) |

| number of datapoints at training tasks (query set) . | |

| number of datapoints at testing tasks (query set). |

For all of these experiments we used a fully connected network with 6 layers and 40 hidden units per layer. The network is trained using the MAML algorithm (Finn et al., 2017) with 5 inner steps using SGD with an inner learning rate of . We used Adam for the outer loop learning with a learning rate of .

Expected error was computed after 500 epochs of optimization and was averaged over 30 runs. We produced our results through a comprehensive grid search over 72 combinations of the settings below and it required around 30 minutes to produce the output of each setting, using a system with 1 gpu and 3 cpus. This experiment therefore lasted 20 hours in total.

, , ,

,

,

eps_per_batch = ,

train_ampl_range = ,

train_phase_range = ,

val_ampl_range = ,

val_phase_range = ,

inner_steps = ,

inner_lr = ,

meta_lr = .