Incremental Few-Shot Learning with Attention Attractor Networks

Abstract

Machine learning classifiers are often trained to recognize a set of pre-defined classes. However, in many applications, it is often desirable to have the flexibility of learning additional concepts, with limited data and without re-training on the full training set. This paper addresses this problem, incremental few-shot learning, where a regular classification network has already been trained to recognize a set of base classes, and several extra novel classes are being considered, each with only a few labeled examples. After learning the novel classes, the model is then evaluated on the overall classification performance on both base and novel classes. To this end, we propose a meta-learning model, the Attention Attractor Network, which regularizes the learning of novel classes. In each episode, we train a set of new weights to recognize novel classes until they converge, and we show that the technique of recurrent back-propagation can back-propagate through the optimization process and facilitate the learning of these parameters. We demonstrate that the learned attractor network can help recognize novel classes while remembering old classes without the need to review the original training set, outperforming various baselines.

1 Introduction

The availability of large scale datasets with detailed annotation, such as ImageNet (Russakovsky et al., 2015), played a significant role in the recent success of deep learning. The need for such a large dataset is however a limitation, since its collection requires intensive human labor. This is also strikingly different from human learning, where new concepts can be learned from very few examples. One line of work that attempts to bridge this gap is few-shot learning (Koch et al., 2015; Vinyals et al., 2016; Snell et al., 2017), where a model learns to output a classifier given only a few labeled examples of the unseen classes. While this is a promising line of work, its practical usability is a concern, because few-shot models only focus on learning novel classes, ignoring the fact that many common classes are readily available in large datasets.

An approach that aims to enjoy the best of both worlds, the ability to learn from large datasets for common classes with the flexibility of few-shot learning for others, is incremental few-shot learning (Gidaris and Komodakis, 2018). This combines incremental learning, where we want to add new classes without catastrophic forgetting (McCloskey and Cohen, 1989), with few-shot learning, where the new classes, unlike the base classes, have only a small number of examples. One use case to illustrate the problem is a visual aid system. Most objects of interest are common to all users, e.g., cars, pedestrian signals; however, users would also like to augment the system with additional personalized items or important landmarks in their area. Such a system needs to be able to learn new classes from few examples, without harming the performance on the original classes and typically without access to the dataset used to train the original classes.

In this work we present a novel method for incremental few-shot learning where during meta-learning we optimize a regularizer that reduces catastrophic forgetting from the incremental few-shot learning. Our proposed regularizer is inspired by attractor networks (Zemel and Mozer, 2001) and can be thought of as a memory of the base classes, adapted to the new classes. We also show how this regularizer can be optimized, using recurrent back-propagation (Liao et al., 2018; Almeida, 1987; Pineda, 1987) to back-propagate through the few-shot optimization stage. Finally, we show empirically that our proposed method can produce state-of-the-art results in incremental few-shot learning on mini-ImageNet (Vinyals et al., 2016) and tiered-ImageNet (Ren et al., 2018) tasks.

2 Related Work

Recently, there has been a surge in interest in few-shot learning (Koch et al., 2015; Vinyals et al., 2016; Snell et al., 2017; Lake et al., 2011), where a model for novel classes is learned with only a few labeled examples. One family of approaches for few-shot learning, including Deep Siamese Networks (Koch et al., 2015), Matching Networks (Vinyals et al., 2016) and Prototypical Networks (Snell et al., 2017), follows the line of metric learning. In particular, these approaches use deep neural networks to learn a function that maps the input space to the embedding space where examples belonging to the same category are close and those belonging to different categories are far apart. Recently, Garcia and Bruna (2018) propose a graph neural networks based method which captures the information propagation from the labeled support set to the query set. Ren et al. (2018) extend Prototypical Networks to leverage unlabeled examples while doing few-shot learning. Despite their simplicity, these methods are very effective and often competitive with the state-of-the-art.

Another class of approaches aims to learn models which can adapt to the episodic tasks. In particular, Ravi and Larochelle (2017) treat the long short-term memory (LSTM) as a meta learner such that it can learn to predict the parameter update of a base learner, e.g., a convolutional neural network (CNN). MAML (Finn et al., 2017) instead learns the hyperparameters or the initial parameters of the base learner by back-propagating through the gradient descent steps. Santoro et al. (2016) use a read/write augmented memory, and Mishra et al. (2018) combine soft attention with temporal convolutions which enables retrieval of information from past episodes.

Methods described above belong to the general class of meta-learning models. First proposed in Schmidhuber (1987); Naik and Mammone (1992); Thrun (1998), meta-learning is a machine learning paradigm where the meta-learner tries to improve the base learner using the learning experiences from multiple tasks. Meta-learning methods typically learn the update policy yet lack an overall learning objective in the few-shot episodes. Furthermore, they could potentially suffer from short-horizon bias (Wu et al., 2018), if at test time the model is trained for longer steps. To address this problem, Bertinetto et al. (2018) propose to use fast convergent models like logistic regression (LR), which can be back-propagated via a closed form update rule. Compared to Bertinetto et al. (2018), our proposed method using recurrent back-propagation (Liao et al., 2018; Almeida, 1987; Pineda, 1987) is more general as it does not require a closed-form update, and the inner loop solver can employ any existing continuous optimizers.

Our work is also related to incremental learning, a setting where information is arriving continuously while prior knowledge needs to be transferred. A key challenge is catastrophic forgetting (McCloskey and Cohen, 1989; McClelland et al., 1995), i.e., the model forgets the learned knowledge. Various memory-based models have since been proposed, which store training examples explicitly (Rebuffi et al., 2017; Sprechmann et al., 2018; Castro et al., 2018; Nguyen et al., 2018), regularize the parameter updates (Kirkpatrick et al., 2017), or learn a generative model (Kemker and Kanan, 2018). However, in these studies, incremental learning typically starts from scratch, and usually performs worse than a regular model that is trained with all available classes together, since it needs to learn a good representation while dealing with catastrophic forgetting.

Incremental few-shot learning is also known as low-shot learning. To leverage a good representation, Hariharan and Girshick (2017); Wang et al. (2018); Gidaris and Komodakis (2018) start off with a network pre-trained on a set of base classes, and try to augment the classifier with a batch of new classes that have not been seen during training. Hariharan and Girshick (2017) propose the squared gradient magnitude loss, which makes the classifier learned from the low-shot examples have a smaller gradient value when learning on all examples. Wang et al. (2018) propose the prototypical matching networks, a combination of prototypical network and matching network. The paper also adds hallucination, which generates new examples. Gidaris and Komodakis (2018) propose an attention based model which generates weights for novel categories. They also promote the use of cosine similarity between feature representations and weight vectors to classify images.

In contrast, during each few-shot episode, we directly learn a classifier network that is randomly initialized and solved till convergence, unlike Gidaris and Komodakis (2018) which directly output the prediction. Since the model cannot see base class data within the support set of each few-shot learning episode, it is challenging to learn a classifier that jointly classifies both base and novel categories. Towards this end, we propose to add a learned regularizer, which is predicted by a meta-network, the “attention attractor network”. The network is learned by differentiating through few-shot learning optimization iterations. We found that using an iterative solver with the learned regularizer significantly improves the classifier model on the task of incremental few-shot learning.

3 Model

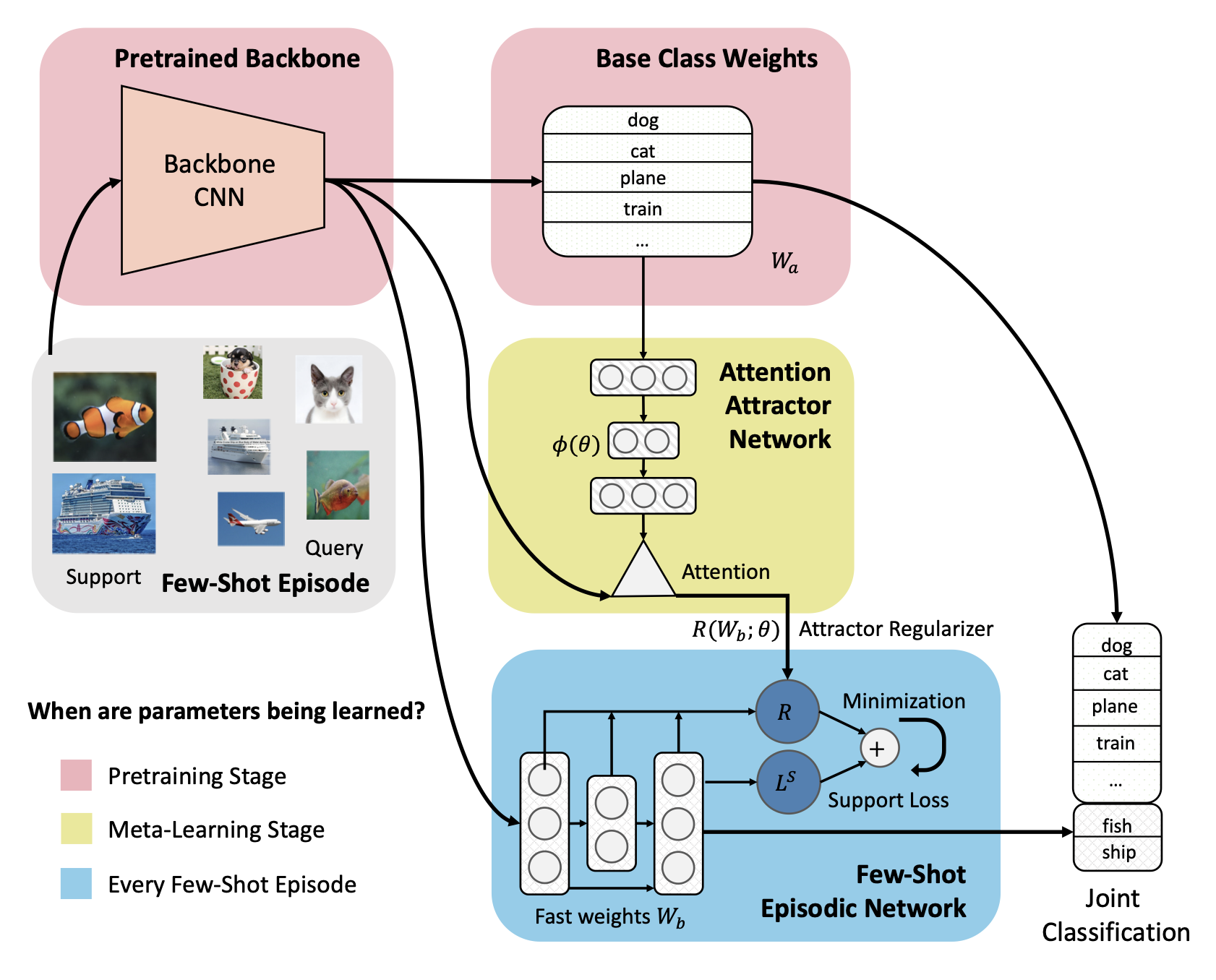

In this section, we first define the setup of incremental few-shot learning, and then we introduce our new model, the Attention Attractor Network, which attends to the set of base classes according to the few-shot training data by using the attractor regularizing term. Figure 1 illustrates the high-level model diagram of our method.

3.1 Incremental Few-Shot Learning

The outline of our meta-learning approach to incremental few-shot learning is: (1) We learn a fixed feature representation and a classifier on a set of base classes; (2) In each training and testing episode we train a novel-class classifier with our meta-learned regularizer; (3) We optimize our meta-learned regularizer on combined novel and base classes classification, adapting it to perform well in conjunction with the base classifier. Details of these stages follow.

Pretraining Stage:

We learn a base model for the regular supervised classification task on dataset $\mathcal{D}_a = \{(x_{a,i}, y_{a,i})\}_{i=1}^{N_a}$, where $x_{a,i}$ is the $i$-th example from dataset $\mathcal{D}_a$ and $y_{a,i} \in \{1, 2, \ldots, K\}$ is its labeled class. The purpose of this stage is to learn both a good base classifier and a good representation. The parameters of the base classifier are learned in this stage and will be fixed after pretraining. We denote the parameters of the top fully connected layer of the base classifier by $W_a \in \mathbb{R}^{D \times K}$, where $D$ is the dimension of our learned representation.

Incremental Few-Shot Episodes:

A few-shot dataset $\mathcal{D}_b$ is presented, from which we can sample few-shot learning episodes $\mathcal{E}$. Note that this can be the same data source as the pretraining dataset $\mathcal{D}_a$, but sampled episodically. For each $N$-shot $K'$-way episode, there are $K'$ novel classes disjoint from the base classes. Each novel class has $N$ and $M$ images from the support set $S_b$ and the query set $Q_b$ respectively. Therefore, we have $\mathcal{E} = (S_b, Q_b)$, $S_b = \{(x^S_{b,i}, y^S_{b,i})\}_{i=1}^{N \times K'}$, $Q_b = \{(x^Q_{b,i}, y^Q_{b,i})\}_{i=1}^{M \times K'}$, where $y_{b,i} \in \{K+1, \ldots, K+K'\}$. $S_b$ and $Q_b$ can be regarded as this episode's training and validation sets. In each episode we learn a classifier on the support set $S_b$ whose learnable parameters $W_b$ are called the fast weights, as they are only used during this episode. To evaluate the performance on a joint prediction of both base and novel classes, i.e., a $(K+K')$-way classification, a mini-batch $Q_a$ sampled from $\mathcal{D}_a$ is also added to $Q_b$ to form $Q_{a+b} = Q_a \cup Q_b$. This means that the learning algorithm, which only has access to samples from the novel classes $S_b$, is evaluated on the joint query set $Q_{a+b}$.
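The episode construction described above can be sketched as follows. This is a minimal illustration and not the released data pipeline: the dictionary layout of `base_pool` and `novel_pool`, the function name `sample_episode`, and the choice to draw as many base query items as novel query items are all assumptions made for the example.

```python
import random

def sample_episode(base_pool, novel_pool, n_way, n_shot, n_query, rng):
    """Sample one incremental few-shot episode.

    base_pool / novel_pool map class id -> list of examples (hypothetical layout).
    Returns the support set (novel classes only) and the joint query set.
    """
    novel_classes = rng.sample(sorted(novel_pool), n_way)
    support, query_novel = [], []
    for c in novel_classes:
        # Draw disjoint support and query examples for this novel class.
        items = rng.sample(novel_pool[c], n_shot + n_query)
        support += [(x, c) for x in items[:n_shot]]
        query_novel += [(x, c) for x in items[n_shot:]]
    # Base-class mini-batch, sized to match the novel query set (assumption).
    base_items = [(x, c) for c, xs in base_pool.items() for x in xs]
    query_base = rng.sample(base_items, len(query_novel))
    return support, query_base + query_novel

rng = random.Random(0)
base_pool = {k: [f"base{k}_{i}" for i in range(20)] for k in range(4)}
novel_pool = {k: [f"novel{k}_{i}" for i in range(20)] for k in range(4, 12)}
support, joint_query = sample_episode(
    base_pool, novel_pool, n_way=5, n_shot=1, n_query=5, rng=rng
)
```

Note that the learner only ever sees `support`, which contains novel classes, while `joint_query` mixes base and novel examples.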

Meta-Learning Stage:

In meta-training, we iteratively sample few-shot episodes $\mathcal{E}$ and try to learn the meta-parameters $\theta$ in order to minimize the joint prediction loss on $Q_{a+b}$. In particular, we design a regularizer $R(\cdot, \theta)$ such that the fast weights are learned via minimizing the loss $\ell(W_b, S_b) + R(W_b, \theta)$, where $\ell(W_b, S_b)$ is typically cross-entropy loss for few-shot classification. The meta-learner tries to learn meta-parameters $\theta$ such that the optimal fast weights $W_b^*$ w.r.t. the above loss function perform well on $Q_{a+b}$. In our model, meta-parameters $\theta$ are encapsulated in our attention attractor network, which produces regularizers for the fast weights in the few-shot learning objective.

Joint Prediction on Base and Novel Classes:

We now introduce the details of our joint prediction framework performed in each few-shot episode. First, we construct an episodic classifier, e.g., a logistic regression (LR) model or a multi-layer perceptron (MLP), which takes the learned image features as inputs and classifies them according to the few-shot classes.

During training on the support set $S_b$, we learn the fast weights $W_b$ via minimizing the following regularized cross-entropy objective, which we call the episodic objective:

$$L^S(W_b, \theta) = -\frac{1}{NK'} \sum_{i=1}^{NK'} \sum_{c=K+1}^{K+K'} y^S_{b,i,c} \log \hat{y}^S_{b,i,c} + R(W_b, \theta). \tag{1}$$

This is a general formulation and the specific functional form of the regularization term $R$ will be specified later. The predicted output $\hat{y}^S_{b,i}$ is obtained via $\hat{y}^S_{b,i} = \mathrm{softmax}(h(x_{b,i}; W_b))$, where $h$ is our classification network and $W_b$ is the fast weights in the network. In the case of LR, $h$ is a linear model: $h(x_{b,i}; W_b) = W_b^\top x_{b,i}$. $h$ can also be an MLP for more expressive power.

During testing on the query set $Q_{a+b}$, in order to predict both base and novel classes, we directly augment the softmax with the fixed base class weights $W_a$: $\hat{y}^Q_i = \mathrm{softmax}([W_a^\top x_i, h(x_i; W_b^*)])$, where $W_b^*$ are the optimal parameters that minimize the regularized classification objective in Eq. (1).
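To make the joint prediction concrete, the following sketch concatenates base-class logits (from fixed base weights) with novel-class logits (from the episode's fast weights) and applies a single softmax. It assumes the LR case with linear logits; the names `W_a`, `W_b`, `joint_predict` and the toy values are illustrative only.

```python
import math

def softmax(logits):
    # Subtract the max for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def linear_logits(W, x):
    """W is a list of per-class weight vectors (the columns of the weight matrix)."""
    return [sum(w_d * x_d for w_d, x_d in zip(w, x)) for w in W]

def joint_predict(W_a, W_b, x):
    """Softmax over concatenated base logits (fixed W_a) and novel logits (fast W_b)."""
    return softmax(linear_logits(W_a, x) + linear_logits(W_b, x))

W_a = [[1.0, 0.0], [0.0, 1.0]]   # two base classes (toy values)
W_b = [[-1.0, -1.0]]             # one novel class (toy values)
probs = joint_predict(W_a, W_b, x=[2.0, 0.5])
```

Because the base and novel logits share one softmax, overly large fast weights can pull probability mass away from the base classes, which is the interference the regularizer is meant to control.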

3.2 Attention Attractor Networks

Directly learning the few-shot episode, e.g., by setting $R(W_b, \theta)$ to be zero or simple weight decay, can cause catastrophic forgetting on the base classes. This is because $W_b$, which is trained to maximize the correct novel class probability, can dominate the base classes in the joint prediction. In this section, we introduce the Attention Attractor Network to address this problem. The key feature of our attractor network is the regularization term $R(W_b, \theta)$:

$$R(W_b, \theta) = \sum_{k'=1}^{K'} (W_{b,k'} - u_{k'})^\top \mathrm{diag}(\exp(\gamma)) (W_{b,k'} - u_{k'}), \tag{2}$$

where $u_{k'}$ is the so-called attractor and $W_{b,k'}$ is the $k'$-th column of $W_b$. This sum of squared Mahalanobis distances from the attractors adds a bias to the learning signal arriving solely from novel classes. Note that for a classifier such as an MLP, one can extend this regularization term in a layer-wise manner. Specifically, one can have separate attractors per layer, and the number of attractors equals the output dimension of that layer.
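A small sketch of the attractor regularizer may help. It assumes the Mahalanobis metric is diagonal with per-dimension weights `exp(gamma)`; that parameterization, the function name, and the toy numbers are assumptions for illustration rather than a statement of the released code.

```python
import math

def attractor_regularizer(W_b, attractors, gamma):
    """Sum over novel classes of squared diagonal-Mahalanobis distances.

    W_b and attractors are lists of per-class vectors; gamma holds the
    log-scale of each feature dimension (assumed diagonal metric).
    """
    total = 0.0
    for w, u in zip(W_b, attractors):
        for w_d, u_d, g_d in zip(w, u, gamma):
            total += math.exp(g_d) * (w_d - u_d) ** 2
    return total

W_b = [[1.0, 2.0], [0.0, 0.0]]          # fast weights for two novel classes
attractors = [[0.0, 2.0], [0.0, 1.0]]   # one attractor per novel class
gamma = [0.0, math.log(2.0)]            # dimension 1 is weighted twice as much
reg = attractor_regularizer(W_b, attractors, gamma)
```

Setting every attractor to zero and every `gamma` entry to the same constant recovers plain weight decay, which is the vanilla baseline discussed in the ablations.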

To ensure that the model performs well on base classes, the attractors $u_{k'}$ must contain some information about examples from base classes. Since we cannot directly access these base examples, we propose to use the slow weights $W_a$ to encode such information. Specifically, each base class $k$ has a learned attractor vector $U_k$ stored in the memory matrix $U = [U_1, \ldots, U_K]$. It is computed as $U_k = f_\phi(W_{a,k})$, where $f$ is an MLP whose learnable parameters are $\phi$. For each novel class $k'$, its classifier is regularized towards its attractor $u_{k'}$, which is a weighted sum of the $U_k$ vectors. Intuitively, the weighting is an attention mechanism where each novel class attends to the base classes according to the level of interference, i.e., how the prediction of new class $k'$ causes the forgetting of base class $k$.

For each class in the support set, we compute the cosine similarity between the average representation of the class and the base weights $W_a$, then normalize using a softmax function:

$$a_{k',k} = \frac{\exp\left(\tau A\left(\frac{1}{N}\sum_{j} h_j \mathbb{1}[y_{b,j} = k'],\; W_{a,k}\right)\right)}{\sum_{k''} \exp\left(\tau A\left(\frac{1}{N}\sum_{j} h_j \mathbb{1}[y_{b,j} = k'],\; W_{a,k''}\right)\right)}, \tag{3}$$

where $A$ is the cosine similarity function, $h_j$ are the representations of the inputs in the support set $S_b$ and $\tau$ is a learnable temperature scalar. $a_{k',k}$ encodes a normalized pairwise attention matrix between the novel classes and the base classes. The attention vector is then used to compute a linear weighted sum of entries in the memory matrix $U$, $u_{k'} = \sum_{k} a_{k',k} U_k + U_0$, where $U_0$ is an embedding vector and serves as a bias for the attractor.
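The attention step can be illustrated with a short sketch for a single novel class: average the support features, score them against each base weight vector by temperature-scaled cosine similarity, apply a softmax, and mix the memory entries. The names `U`, `U_0`, `tau` and the toy values are assumptions for the example; in the model the memory entries would come from a learned MLP applied to the base weights.

```python
import math

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def attention_attractor(support_feats, W_a, U, U_0, tau):
    """Return (attention over base classes, attractor) for one novel class."""
    d = len(support_feats[0])
    # Average representation of the novel class over its support examples.
    mean = [sum(h[i] for h in support_feats) / len(support_feats) for i in range(d)]
    scores = [tau * cosine(mean, w) for w in W_a]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    attn = [e / sum(exps) for e in exps]
    # Attractor = attention-weighted sum of memory entries plus a shared bias.
    attractor = [U_0[i] + sum(a * U_k[i] for a, U_k in zip(attn, U)) for i in range(d)]
    return attn, attractor

support_feats = [[1.0, 0.0], [1.0, 0.2]]  # two support features of one novel class
W_a = [[1.0, 0.0], [0.0, 1.0]]            # base class weight vectors
U = [[0.5, 0.0], [0.0, 0.5]]              # memory entries, one per base class
attn, attractor = attention_attractor(support_feats, W_a, U, U_0=[0.0, 0.0], tau=10.0)
```

Here the novel class resembles the first base class, so nearly all attention goes to the first memory entry and the attractor lies close to it.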

Our design takes inspiration from attractor networks (Mozer, 2009; Zemel and Mozer, 2001), where for each base class one learns an “attractor” that stores the relevant memory regarding that class. We call our full model “dynamic attractors” as they may vary with each episode even after meta-learning. In contrast, if we only have the bias term $U_0$, i.e., a single attractor which is shared by all novel classes, it will not change after meta-learning from one episode to the other. We call this model variant the “static attractor”.

In summary, our meta-parameters $\theta$ include $\phi$, $U_0$, $\gamma$ and $\tau$, whose total size is on the same scale as the number of parameters in $W_a$. It is important to note that $R(W_b, \theta)$ is convex w.r.t. $W_b$. Therefore, if we use the LR model as the classifier, the overall training objective on episodes in Eq. (1) is convex, which implies that the optimum $W_b^*(\theta, S_b)$ is guaranteed to be unique and achievable. Here we emphasize that the optimal parameters $W_b^*$ are functions of parameters $\theta$ and few-shot samples $S_b$.
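A short derivation may make the convexity claim concrete. It assumes the regularizer uses a diagonal metric with per-dimension weights $\exp(\gamma_d)$ (an assumption about notation if the form of Eq. (2) differs). For each novel class $k'$,

$$\nabla^2_{W_{b,k'}} R = 2\,\mathrm{diag}(\exp(\gamma)) \succ 0 .$$

Every diagonal entry is strictly positive, so $R$ is strictly convex in $W_b$. The softmax cross-entropy of a linear model is convex in its weights, and the sum of a convex and a strictly convex function is strictly convex, which is why the LR episodic objective has a single minimizer.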

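During meta-learning, $\theta$ are updated to minimize an expected loss on the query set $Q_{a+b}$, which contains both base and novel classes, averaging over all few-shot learning episodes,

$$\min_\theta \; \mathbb{E}_{\mathcal{E}}\left[L^Q(\theta, S_b)\right] = \mathbb{E}_{\mathcal{E}}\left[-\sum_{j=1}^{|Q_{a+b}|} \sum_{c=1}^{K+K'} y_{j,c} \log \hat{y}_{j,c}(\theta, S_b)\right], \tag{4}$$

where the predicted class is $\hat{y}_j(\theta, S_b) = \mathrm{softmax}([W_a^\top x_j, h(x_j; W_b^*(\theta, S_b))])$.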

3.3 Learning via Recurrent Back-Propagation

As there is no closed-form solution to the episodic objective (the optimization problem in Eq. 1), in each episode we need to minimize $L^S$ to obtain $W_b^*$ through an iterative optimizer. The question is how to efficiently compute $\partial W_b^* / \partial \theta$, i.e., back-propagating through the optimization. One option is to unroll the iterative optimization process in the computation graph and use back-propagation through time (BPTT) (Werbos, 1990). However, the number of iterations for a gradient-based optimizer to converge can be on the order of thousands, and BPTT can be computationally prohibitive. Another way is to use truncated BPTT (T-BPTT) (Williams and Peng, 1990), which optimizes for $T$ steps of gradient-based optimization, and is commonly used in meta-learning problems. However, when $T$ is small the training objective could be significantly biased.

Alternatively, the recurrent back-propagation (RBP) algorithm (Almeida, 1987; Pineda, 1987; Liao et al., 2018) allows us to back-propagate through the fixed point efficiently without unrolling the computation graph and storing intermediate activations. Consider a vanilla gradient descent process on $W_b$ with step size $\alpha$. The difference between two steps, $\Phi$, can be written as $\Phi(W_b^{(t)}) = W_b^{(t)} - F(W_b^{(t)})$, where $F(W_b^{(t)}) = W_b^{(t+1)} = W_b^{(t)} - \alpha \nabla L^S(W_b^{(t)})$. Since $\Phi(W_b^*(\theta))$ is identically zero as a function of $\theta$, using the implicit function theorem we have $\frac{\partial W_b^*}{\partial \theta} = (I - J_{F,W_b^*}^\top)^{-1} \frac{\partial F}{\partial \theta}$, where $J_{F,W_b^*}$ denotes the Jacobian matrix of the mapping $F$ evaluated at $W_b^*$. Algorithm 1 outlines the key steps for learning the episodic objective using RBP in the incremental few-shot learning setting. Note that the RBP algorithm implicitly inverts $(I - J^\top)$ by computing the matrix-inverse vector product, and has the same time complexity as truncated BPTT given the same number of unrolled steps, while not having to store intermediate activations.
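The implicit-function-theorem gradient can be checked on a scalar toy problem. The sketch below is not the paper's algorithm in full: it assumes a hypothetical one-dimensional episodic objective $0.5\,a\,(w-c)^2 + 0.5\,\theta\,(w-u)^2$ in which the meta-parameter $\theta$ is the regularization strength, solves it by gradient descent, and compares the fixed-point gradient with a finite-difference estimate.

```python
def inner_grad(w, theta, a=2.0, c=1.0, u=0.0):
    """Gradient of the toy objective 0.5*a*(w-c)^2 + 0.5*theta*(w-u)^2 w.r.t. w."""
    return a * (w - c) + theta * (w - u)

def solve_inner(theta, alpha=0.1, steps=500):
    """Run gradient descent F(w) = w - alpha * grad until (numerical) convergence."""
    w = 0.0
    for _ in range(steps):
        w = w - alpha * inner_grad(w, theta)
    return w

def implicit_grad(theta, alpha=0.1, a=2.0, u=0.0):
    """dw*/dtheta = (1 - dF/dw)^{-1} * dF/dtheta, evaluated at the fixed point."""
    w_star = solve_inner(theta, alpha)
    jac = 1.0 - alpha * (a + theta)     # dF/dw at the fixed point
    dF_dtheta = -alpha * (w_star - u)   # dF/dtheta at the fixed point
    return dF_dtheta / (1.0 - jac)

theta = 0.5
g_implicit = implicit_grad(theta)
eps = 1e-5
g_numeric = (solve_inner(theta + eps) - solve_inner(theta - eps)) / (2 * eps)
```

Only the converged solution is needed to form the gradient; none of the 500 intermediate iterates has to be stored, which is the practical appeal of the fixed-point formulation.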

Damped Neumann RBP

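To compute the matrix-inverse vector product $(I - J^\top)^{-1} v$, Liao et al. (2018) propose to use the Neumann series: $(I - J^\top)^{-1} v = \sum_{n=0}^{\infty} (J^\top)^n v \equiv \sum_{n=0}^{\infty} v^{(n)}$. Note that $J^\top v$ can be computed by standard back-propagation. However, directly applying the Neumann RBP algorithm sometimes leads to numerical instability. Therefore, we propose to add a damping term $0 < \epsilon < 1$ to $I - J^\top$. This results in the following update: $\tilde{v}^{(n)} = (J^\top - \epsilon I)^n v$. In practice, we found the damping term with $\epsilon = 0.1$ helps alleviate the issue significantly.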
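The damped series can be sketched with an explicit small matrix. In practice the product of the transposed Jacobian with a vector would come from back-propagation; here a hand-written 2×2 matrix `J_T` stands in for it, and the function name and values are assumptions for illustration. Summing the terms $(J^\top - \epsilon I)^n v$ approximates $((1+\epsilon) I - J^\top)^{-1} v$.

```python
def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

def damped_neumann(J_T, v, eps=0.1, n_iter=20):
    """Approximate ((1 + eps) I - J^T)^{-1} v by a truncated Neumann series."""
    term = list(v)    # current term (J^T - eps I)^n v, starting at n = 0
    total = list(v)   # running sum of the terms
    for _ in range(n_iter):
        jv = matvec(J_T, term)
        term = [j - eps * t for j, t in zip(jv, term)]
        total = [s + t for s, t in zip(total, term)]
    return total

J_T = [[0.5, 0.1], [0.0, 0.4]]   # stand-in for the transposed Jacobian
v = [1.0, 2.0]
approx = damped_neumann(J_T, v, eps=0.1, n_iter=50)
```

The damping shifts the matrix being inverted, so the result is a slightly biased but better-conditioned estimate; with `eps=0.0` the loop reduces to the plain Neumann series.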

4 Experiments

We experiment on two few-shot classification datasets, mini-ImageNet and tiered-ImageNet. Both are subsets of ImageNet (Russakovsky et al., 2015), with image sizes reduced to 84 × 84 pixels. We also modified the datasets to accommodate the incremental few-shot learning settings. Code released at: https://github.com/renmengye/inc-few-shot-attractor-public

4.1 Datasets

- tiered-ImageNet: Proposed by Ren et al. (2018), tiered-ImageNet is a larger subset of ILSVRC-12. It features a categorical split among training, validation, and testing subsets. The categorical split means that classes that belong to the same high-level category, e.g. “working dog” and “terrier” or some other dog breed, are not split between training, validation and test. This is a harder task, but one that more strictly evaluates generalization to new classes. It is also an order of magnitude larger than mini-ImageNet.

| Method | Few-shot learner | Episodic objective | Attention mechanism |
|---|---|---|---|
| Imprint (Qi et al., 2018) | Prototypes | N/A | N/A |
| LwoF (Gidaris and Komodakis, 2018) | Prototypes + base classes | N/A | Attention on base classes |
| Ours | A fully trained classifier | Cross entropy on novel classes | Attention on learned attractors |

Table 2: Incremental few-shot results on mini-ImageNet.

| Model | 1-shot Acc. ↑ | 1-shot Δ ↓ | 5-shot Acc. ↑ | 5-shot Δ ↓ |
|---|---|---|---|---|
| ProtoNet | 42.73 ± 0.15 | -20.21 | 57.05 ± 0.10 | -31.72 |
| Imprint | 41.10 ± 0.20 | -22.49 | 44.68 ± 0.23 | -27.68 |
| LwoF | 52.37 ± 0.20 | -13.65 | 59.90 ± 0.20 | -14.18 |
| Ours | 54.95 ± 0.30 | -11.84 | 63.04 ± 0.30 | -10.66 |

Table 3: Incremental few-shot results on tiered-ImageNet.

| Model | 1-shot Acc. ↑ | 1-shot Δ ↓ | 5-shot Acc. ↑ | 5-shot Δ ↓ |
|---|---|---|---|---|
| ProtoNet | 30.04 ± 0.21 | -29.54 | 41.38 ± 0.28 | -26.39 |
| Imprint | 39.13 ± 0.15 | -22.26 | 53.60 ± 0.18 | -16.35 |
| LwoF | 52.40 ± 0.33 | -8.27 | 62.63 ± 0.31 | -6.72 |
| Ours | 56.11 ± 0.33 | -6.11 | 65.52 ± 0.31 | -4.48 |

Δ = average decrease in acc. caused by joint prediction within base and novel classes ($\Delta = \frac{1}{2}(\Delta_a + \Delta_b)$). ↑ (↓) indicates that higher (lower) is better.

4.2 Experiment setup

We use a standard ResNet backbone (He et al., 2016) to learn the feature representation through supervised training. For mini-ImageNet experiments, we follow Mishra et al. (2018) and use a modified version of ResNet-10. For tiered-ImageNet, we use the standard ResNet-18 (He et al., 2016), but replace all batch normalization (Ioffe and Szegedy, 2015) layers with group normalization (Wu and He, 2018), as there is a large distributional shift from training to testing in tiered-ImageNet due to categorical splits. We used standard data augmentation, with random crops and horizontal flips. We use the same pretrained checkpoint as the starting point for meta-learning.

In the meta-learning stage as well as the final evaluation, we sample a few-shot episode from $\mathcal{D}_b$, together with a regular mini-batch from $\mathcal{D}_a$. The base class images are added to the query set of the few-shot episode. The base and novel classes are maintained in equal proportion in our experiments. For all the experiments, we consider 5-way classification with 1 or 5 support examples (i.e., shots). In the experiments, we use a query set of size 25 × 2 = 50.

We use L-BFGS (Zhu et al., 1997) to solve the inner loop of our models to make sure $W_b$ converges. We use the ADAM (Kingma and Ba, 2015) optimizer for meta-learning with a learning rate of 1e-3, which decays by a factor of 10 after 4,000 steps, for a total of 8,000 steps. We fix recurrent backpropagation to 20 iterations and $\epsilon = 0.1$.

We study two variants of the classifier network. The first is a logistic regression model with a single weight matrix $W_b$. The second is a 2-layer fully connected MLP model with 40 hidden units in the middle and tanh non-linearity. To make training more efficient, we also add a shortcut connection in our MLP, which directly links the input to the output. In the second stage of training, we keep all backbone weights frozen and only train the meta-parameters $\theta$.

4.3 Evaluation metrics

We consider the following evaluation metrics: 1) overall accuracy on individual query sets and the joint query set (“Base”, “Novel”, and “Both”); and 2) decrease in performance caused by joint prediction within the base and novel classes, considered separately (“$\Delta_a$” and “$\Delta_b$”). Finally we take the average $\Delta = \frac{1}{2}(\Delta_a + \Delta_b)$ as a key measure of the overall decrease in accuracy.
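As a worked example of the second metric, the sketch below computes the per-group drops and their average from four accuracies. The sign convention (joint accuracy minus individual accuracy, so a drop is negative) is an assumption chosen to match the negative values reported in the tables, and the input numbers are hypothetical, not results from the paper.

```python
def accuracy_drop(acc_individual, acc_joint):
    """Change in accuracy when moving from individual to joint prediction."""
    return acc_joint - acc_individual

def mean_delta(base_individual, base_joint, novel_individual, novel_joint):
    """Return (delta_a, delta_b, delta) with delta the average of the two drops."""
    delta_a = accuracy_drop(base_individual, base_joint)
    delta_b = accuracy_drop(novel_individual, novel_joint)
    return delta_a, delta_b, 0.5 * (delta_a + delta_b)

# Hypothetical accuracies in percent, for illustration only.
delta_a, delta_b, delta = mean_delta(
    base_individual=80.0, base_joint=70.0,
    novel_individual=60.0, novel_joint=46.0,
)
```

Averaging the two drops keeps a model from scoring well simply by sacrificing one group of classes for the other.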

4.4 Comparisons

We implemented and compared to three methods. First, we adapted Prototypical Networks (Snell et al., 2017) to incremental few-shot settings. For each base class we store a base representation, which is the average representation (prototype) over all images belonging to the base class. During the few-shot learning stage, we again average the representation of the few-shot classes and add them to the bank of base representations. Finally, we retrieve the nearest neighbor by comparing the representation of a test image with entries in the representation store. In summary, both $W_a$ and $W_b$ are stored as the average representation of all images seen so far that belong to a certain class. We also compare to the following methods:

- Weights Imprinting (“Imprint”) (Qi et al., 2018): the base weights $W_a$ are learned regularly through supervised pre-training, and $W_b$ are computed using prototypical averaging.

- Learning without Forgetting (“LwoF”) (Gidaris and Komodakis, 2018): Similar to Qi et al. (2018), $W_b$ are computed using prototypical averaging. In addition, $W_a$ is finetuned during episodic meta-learning. We implemented the most advanced variant proposed in the paper, which involves a class-wise attention mechanism. This model is the previous state-of-the-art method on incremental few-shot learning, and has better performance compared to other low-shot models (Wang et al., 2018; Hariharan and Girshick, 2017).
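The prototype-based baseline described above (averaging representations per class, then retrieving the nearest stored entry) can be sketched in a few lines. The Euclidean distance, the string class labels, and the toy feature vectors are assumptions for the example; the text does not fix the comparison function.

```python
import math

def prototype(features):
    """Average a list of feature vectors into a single class prototype."""
    d = len(features[0])
    return [sum(f[i] for f in features) / len(features) for i in range(d)]

def nearest_prototype(bank, x):
    """Return the label whose prototype is closest to x (Euclidean, by assumption)."""
    def dist(p):
        return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, x)))
    return min(bank, key=lambda label: dist(bank[label]))

# Base prototypes are stored once; novel prototypes are added per episode.
bank = {"base_cat": prototype([[1.0, 0.0], [0.8, 0.2]])}
bank["novel_bird"] = prototype([[0.0, 1.0]])
pred = nearest_prototype(bank, [0.1, 0.9])
```

No optimization happens inside the episode here, which is the main contrast with the fully trained episodic classifier used in our method.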

4.5 Results

We first evaluate our vanilla approach on the standard few-shot classification benchmark where no base classes are present in the query set. Our vanilla model consists of a pretrained CNN and a single-layer logistic regression with weight decay learned from scratch; this model performs on par with other competitive meta-learning approaches (1-shot 55.40 ± 0.51, 5-shot 70.17 ± 0.46). Note that our model uses the same backbone architecture as Mishra et al. (2018) and Gidaris and Komodakis (2018), and is directly comparable with their results. Similar findings of strong results using simple logistic regression on few-shot classification benchmarks were also recently reported by Chen et al. (2019). Our full model has similar performance to the vanilla model on pure few-shot benchmarks, and the full table is available in Supp. Materials.

Next, we compare our models to other methods on incremental few-shot learning benchmarks in Tables 2 and 3. On both benchmarks, our best performing model shows a significant margin over the prior works that predict the prototype representation without using an iterative optimization (Snell et al., 2017; Qi et al., 2018; Gidaris and Komodakis, 2018).

Table 4: Ablation results on mini-ImageNet.

| Model | 1-shot Acc. ↑ | 1-shot Δ ↓ | 5-shot Acc. ↑ | 5-shot Δ ↓ |
|---|---|---|---|---|
| LR | 52.74 ± 0.24 | -13.95 | 60.34 ± 0.20 | -13.60 |
| LR +S | 53.63 ± 0.30 | -12.53 | 62.50 ± 0.30 | -11.29 |
| LR +A | 55.31 ± 0.32 | -11.72 | 63.00 ± 0.29 | -10.80 |
| MLP | 49.36 ± 0.29 | -16.78 | 60.85 ± 0.29 | -12.62 |
| MLP +S | 54.46 ± 0.31 | -11.74 | 62.79 ± 0.31 | -10.77 |
| MLP +A | 54.95 ± 0.30 | -11.84 | 63.04 ± 0.30 | -10.66 |

Table 5: Ablation results on tiered-ImageNet.

| Model | 1-shot Acc. ↑ | 1-shot Δ ↓ | 5-shot Acc. ↑ | 5-shot Δ ↓ |
|---|---|---|---|---|
| LR | 48.84 ± 0.23 | -10.44 | 62.08 ± 0.20 | -8.00 |
| LR +S | 55.36 ± 0.32 | -6.88 | 65.53 ± 0.30 | -4.68 |
| LR +A | 55.98 ± 0.32 | -6.07 | 65.58 ± 0.29 | -4.39 |
| MLP | 41.22 ± 0.35 | -10.61 | 62.70 ± 0.31 | -7.44 |
| MLP +S | 56.16 ± 0.32 | -6.28 | 65.80 ± 0.31 | -4.58 |
| MLP +A | 56.11 ± 0.33 | -6.11 | 65.52 ± 0.31 | -4.48 |

“+S” stands for static attractors, and “+A” for attention attractors.

4.6 Ablation studies

To understand the effectiveness of each part of the proposed model, we consider the following variants:

- Vanilla (“LR, MLP”) optimizes a logistic regression or an MLP network at each few-shot episode, with a weight decay regularizer.

- Static attractor (“+S”) learns a fixed attractor center $u$ and attractor slope $\gamma$ for all classes.

- Attention attractor (“+A”) learns the full attention attractor model. For MLP models, the weights below the final layer are controlled by attractors predicted by the average representation across all the episodes. $f_\phi$ is an MLP with one hidden layer of 50 units.

Tables 4 and 5 show the ablation experiment results. In all cases, the learned regularization function shows better performance than a manually set weight decay constant on the classifier network, in terms of both jointly predicting base and novel classes, as well as less degradation from individual prediction. On mini-ImageNet, our attention attractors have a clear advantage over static attractors.

Formulating the classifier as an MLP network is slightly better than the linear models in our experiments. Although the final performance is similar, our RBP-based algorithm has the flexibility of adding a fast episodic model with more capacity. Unlike Bertinetto et al. (2018), we do not rely on an analytic form of the gradients of the optimization process.

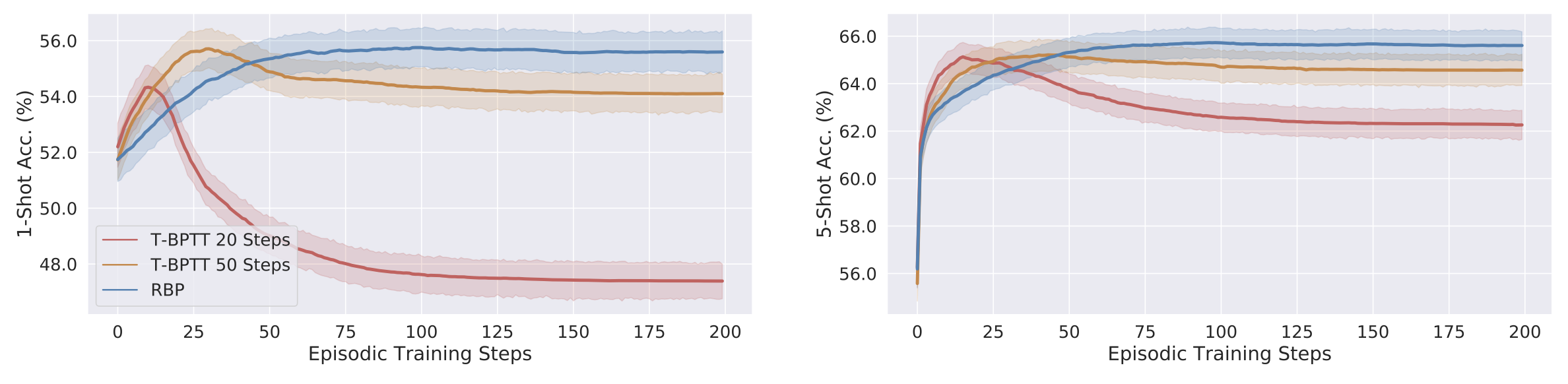

Comparison to truncated BPTT (T-BPTT)

An alternative way to learn the regularizer is to unroll the inner optimization for a fixed number of steps in a differentiable computation graph, and then back-propagate through time. Truncated BPTT is a popular learning algorithm in many recent meta-learning approaches (Andrychowicz et al., 2016; Ravi and Larochelle, 2017; Finn et al., 2017; Sprechmann et al., 2018; Balaji et al., 2018). As shown in Figure 2, the performance of T-BPTT learned models is comparable to ours; however, when solved to convergence at test time, the performance of T-BPTT models drops significantly. This is expected, as they are only guaranteed to work well for a certain number of steps, and fail to learn a good regularizer. While an early-stopped T-BPTT model can do equally well, in practice it is hard to tell when to stop; whereas for the RBP model, doing the full episodic training is very fast since the number of support examples is small.
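The mismatch between a short unroll and the converged solution can be seen on a scalar toy problem. The sketch below assumes a hypothetical inner objective $0.5\,a\,(w-c)^2 + 0.5\,\theta\,(w-u)^2$ and tracks the derivative of the $T$-step iterate with respect to $\theta$ by forward accumulation, which yields the same value that back-propagating through those $T$ unrolled steps would. It is an illustration of the bias, not a reproduction of the experiment in Figure 2.

```python
def unrolled_grad(theta, steps, alpha=0.1, a=2.0, c=1.0, u=0.0):
    """Return (w_T, dw_T/dtheta) after `steps` gradient-descent updates from w = 0."""
    w, dw = 0.0, 0.0
    for _ in range(steps):
        # Differentiate the update w <- w - alpha*(a*(w-c) + theta*(w-u)) w.r.t. theta,
        # using the value of w from before the update.
        dw = (1.0 - alpha * (a + theta)) * dw - alpha * (w - u)
        w = w - alpha * (a * (w - c) + theta * (w - u))
    return w, dw

theta = 0.5
# Closed-form gradient of the converged solution w* = (a*c + theta*u) / (a + theta).
exact = 2.0 * (0.0 - 1.0) / (2.0 + theta) ** 2
_, g_short = unrolled_grad(theta, steps=5)
_, g_long = unrolled_grad(theta, steps=500)
```

With 5 steps the gradient is far from the converged value, while 500 steps recover it; a regularizer tuned with the short-horizon gradient is therefore tuned for a solver that stops early.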

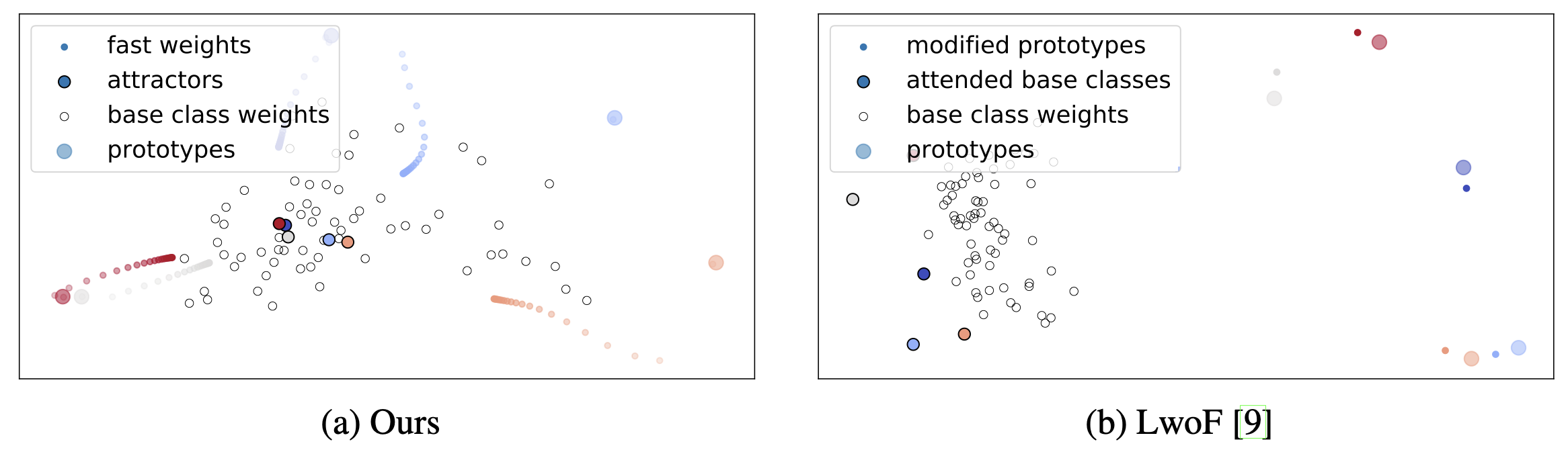

Visualization of attractor dynamics

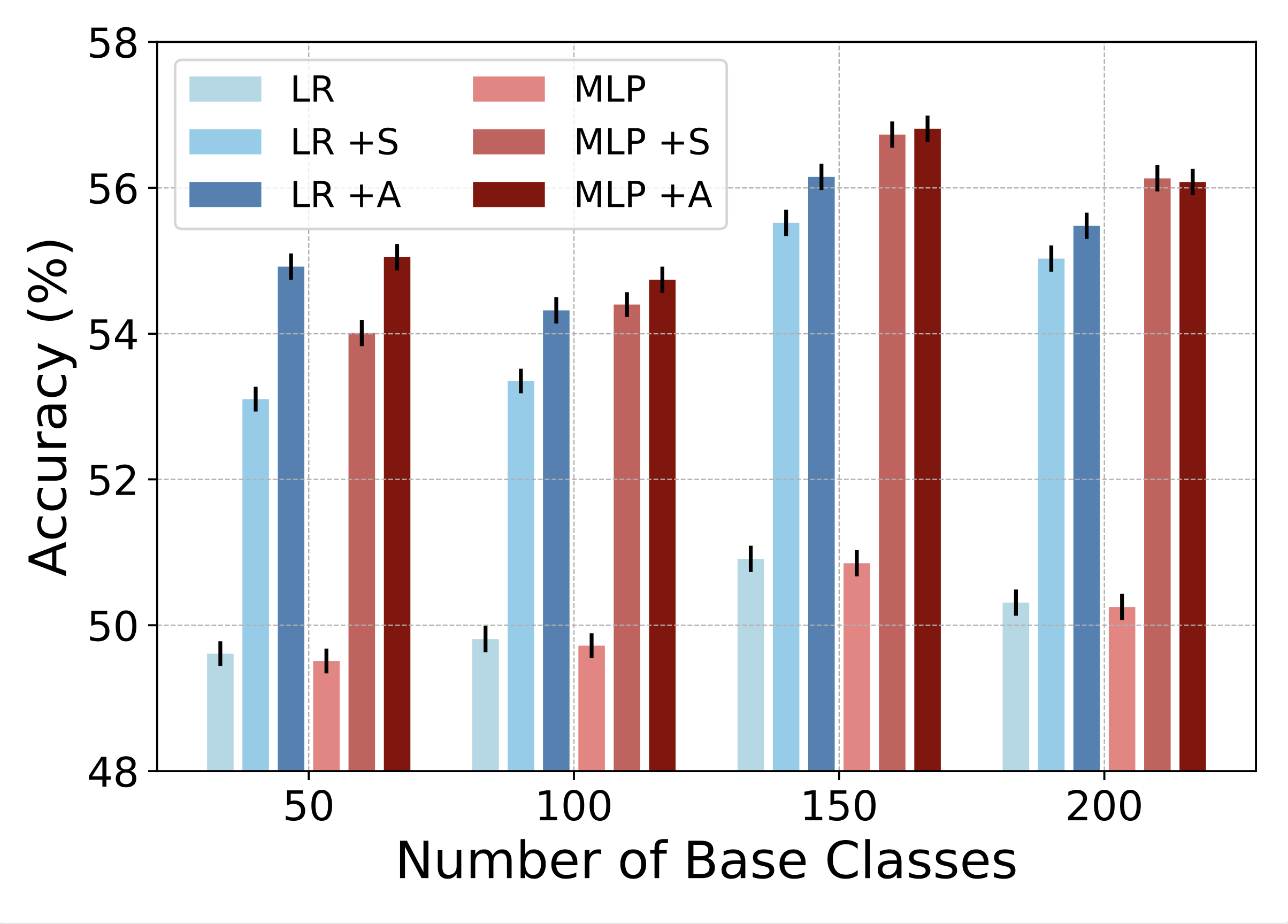

Varying the number of base classes

While the framework proposed in this paper cannot be directly applied on class-incremental continual learning, as there is no module for memory consolidation, we can simulate the continual learning process by varying the number of base classes, to see how the proposed models are affected by different stages of continual learning. Figure 4 shows that the learned regularizers consistently improve over baselines with weight decay only. The overall accuracy increases from 50 to 150 classes due to better representations on the backbone network, and drops at 200 classes due to a more challenging classification task.

5 Conclusion

Incremental few-shot learning, the ability to jointly predict based on a set of pre-defined concepts as well as additional novel concepts, is an important step towards making machine learning models more flexible and usable in everyday life. In this work, we propose an attention attractor model, which regulates a per-episode training objective by attending to the set of base classes. We show that our iterative model that solves the few-shot objective till convergence is better than baselines that do one-step inference, and that recurrent back-propagation is an effective and modular tool for learning in a general meta-learning setting, whereas truncated back-propagation through time fails to learn functions that converge well. Future directions of this work include sequential iterative learning of few-shot novel concepts, and hierarchical memory organization.

Acknowledgment

Supported by NSERC and the Intelligence Advanced Research Projects Activity (IARPA) via Department of Interior/Interior Business Center (DoI/IBC) contract number D16PC00003. The U.S. Government is authorized to reproduce and distribute reprints for Governmental purposes notwithstanding any copyright annotation thereon. Disclaimer: The views and conclusions contained herein are those of the authors and should not be interpreted as necessarily representing the official policies or endorsements, either expressed or implied, of IARPA, DoI/IBC, or the U.S. Government.

References

- A learning rule for asynchronous perceptrons with feedback in a combinatorial environment.. In Proceedings of the 1st International Conference on Neural Networks, Vol. 2, pp. 609–618. Cited by: §1, §2, §3.3.

- Learning to learn by gradient descent by gradient descent. In Advances in Neural Information Processing Systems 29 (NIPS), Cited by: §4.6.

- MetaReg: towards domain generalization using meta-regularization. In Advances in Neural Information Processing Systems 31: Annual Conference on Neural Information Processing Systems (NeurIPS), Cited by: §4.6.

- Meta-learning with differentiable closed-form solvers. CoRR abs/1805.08136. Cited by: Table 6, §2, §4.6.

- End-to-end incremental learning. In European Conference on Computer Vision (ECCV), Cited by: §2.

- A closer look at few-shot classification. In Proceedings of the 7th International Conference on Learning Representations (ICLR), Cited by: §4.5.

- Model-agnostic meta-learning for fast adaptation of deep networks. In Proceedings of the 34th International Conference on Machine Learning (ICML), Cited by: Table 6, §2, §4.6.

- Few-shot learning with graph neural networks. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: §2.

- Dynamic few-shot visual learning without forgetting. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Cited by: Table 6, Appendix B, §D.1, §D.2, §1, §2, §2, Figure 3, 2nd item, §4.5, §4.5, §4.6, Table 1.

- Low-shot visual recognition by shrinking and hallucinating features. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Cited by: §2, 2nd item.

- Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 770–778. Cited by: §4.2.

- Batch normalization: accelerating deep network training by reducing internal covariate shift. In Proceedings of the 32nd International Conference on Machine Learning (ICML), Cited by: §4.2.

- FearNet: brain-inspired model for incremental learning. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: §2.

- Adam: a method for stochastic optimization. In Proceedings of the 3rd International Conference on Learning Representations (ICLR), Cited by: §4.2.

- Overcoming catastrophic forgetting in neural networks. Proceedings of the National Academy of Sciences (PNAS), pp. 201611835. Cited by: §2.

- Siamese neural networks for one-shot image recognition. In ICML Deep Learning Workshop, Vol. 2. Cited by: §1, §2.

- One shot learning of simple visual concepts. In Proceedings of the 33rd Annual Meeting of the Cognitive Science Society (CogSci), Cited by: §2.

- Reviving and improving recurrent back-propagation. In Proceedings of the 35th International Conference on Machine Learning (ICML), Cited by: §1, §2, §3.3, §3.3.

- Why there are complementary learning systems in the hippocampus and neocortex: insights from the successes and failures of connectionist models of learning and memory. Psychological Review 102 (3), pp. 419. Cited by: §2.

- Catastrophic interference in connectionist networks: the sequential learning problem. In Psychology of Learning and Motivation, Vol. 24, pp. 109–165. Cited by: §1, §2.

- A simple neural attentive meta-learner. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: Table 6, §2, §4.2, §4.5.

- Attractor networks. The Oxford Companion to Consciousness, pp. 86–89. Cited by: §3.2.

- Meta-neural networks that learn by learning. In Proceedings of the IEEE International Joint Conference on Neural Networks (IJCNN), Cited by: §2.

- Variational continual learning. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: §2.

- Generalization of back-propagation to recurrent neural networks. Physical Review Letters 59 (19), pp. 2229. Cited by: §1, §2, §3.3.

- Low-shot learning with imprinted weights. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Cited by: 1st item, 2nd item, §4.5, Table 1.

- Optimization as a model for few-shot learning. In Proceedings of the 5th International Conference on Learning Representations (ICLR), Cited by: Table 6, §2, 1st item, §4.6.

- iCaRL: incremental classifier and representation learning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Cited by: §2.

- Meta-learning for semi-supervised few-shot classification. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: §1, §2, 2nd item.

- ImageNet large scale visual recognition challenge. International Journal of Computer Vision 115 (3), pp. 211–252. Cited by: §1, §4.

- One-shot learning with memory-augmented neural networks. In Proceedings of the 33rd International Conference on Machine Learning (ICML), Cited by: §2.

- Evolutionary principles in self-referential learning, or on learning how to learn: the meta-meta-… hook. Diplomarbeit, Technische Universität München, München. Cited by: §2.

- Prototypical networks for few-shot learning. In Advances in Neural Information Processing Systems 30 (NIPS), Cited by: Table 6, §1, §2, §4.4, §4.5.

- Memory-based parameter adaptation. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: §2, §4.6.

- Learning to compare: relation network for few-shot learning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Cited by: Table 6.

- Lifelong learning algorithms. In Learning to Learn, pp. 181–209. Cited by: §2.

- Matching networks for one shot learning. In Advances in Neural Information Processing Systems 29 (NIPS), Cited by: Table 6, §1, §1, §2, 1st item.

- Low-shot learning from imaginary data. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Cited by: §2, 2nd item.

- Backpropagation through time: what it does and how to do it. Proceedings of the IEEE 78 (10), pp. 1550–1560. Cited by: §3.3.

- An efficient gradient-based algorithm for on-line training of recurrent network trajectories. Neural Computation 2 (4), pp. 490–501. Cited by: §3.3.

- Understanding short-horizon bias in stochastic meta-optimization. In Proceedings of the 6th International Conference on Learning Representations (ICLR), Cited by: §2.

- Group normalization. In European Conference on Computer Vision (ECCV), Cited by: §4.2.

- Localist attractor networks. Neural Computation 13 (5), pp. 1045–1064. Cited by: §1, §3.2.

- Algorithm 778: L-BFGS-B: Fortran subroutines for large-scale bound-constrained optimization. ACM Transactions on Mathematical Software (TOMS) 23 (4), pp. 550–560. Cited by: §4.2.

Appendix A Regular Few-Shot Classification

We include standard 5-way few-shot classification results in Table 6. As mentioned in the main text, a simple logistic regression model can achieve competitive performance on few-shot classification using pretrained features. Our full model shows similar performance on regular few-shot classification. This confirms that the learned regularizer is mainly solving the interference problem between the base and novel classes.

| Model | Backbone | 1-shot | 5-shot |
|---|---|---|---|
| MatchingNets (Vinyals et al., 2016) | C64 | 43.60 | 55.30 |
| Meta-LSTM (Ravi and Larochelle, 2017) | C32 | 43.40 ± 0.77 | 60.20 ± 0.71 |
| MAML (Finn et al., 2017) | C64 | 48.70 ± 1.84 | 63.10 ± 0.92 |
| RelationNet (Sung et al., 2018) | C64 | 50.44 ± 0.82 | 65.32 ± 0.70 |
| R2-D2 (Bertinetto et al., 2018) | C256 | 51.20 ± 0.60 | 68.20 ± 0.60 |
| SNAIL (Mishra et al., 2018) | ResNet | 55.71 ± 0.99 | 68.88 ± 0.92 |
| ProtoNet (Snell et al., 2017) | C64 | 49.42 ± 0.78 | 68.20 ± 0.66 |
| ProtoNet* (Snell et al., 2017) | ResNet | 50.09 ± 0.41 | 70.76 ± 0.19 |
| LwoF (Gidaris and Komodakis, 2018) | ResNet | 55.45 ± 0.89 | 70.92 ± 0.35 |
| LR | ResNet | 55.40 ± 0.51 | 70.17 ± 0.46 |
| Ours Full | ResNet | 55.75 ± 0.51 | 70.14 ± 0.44 |

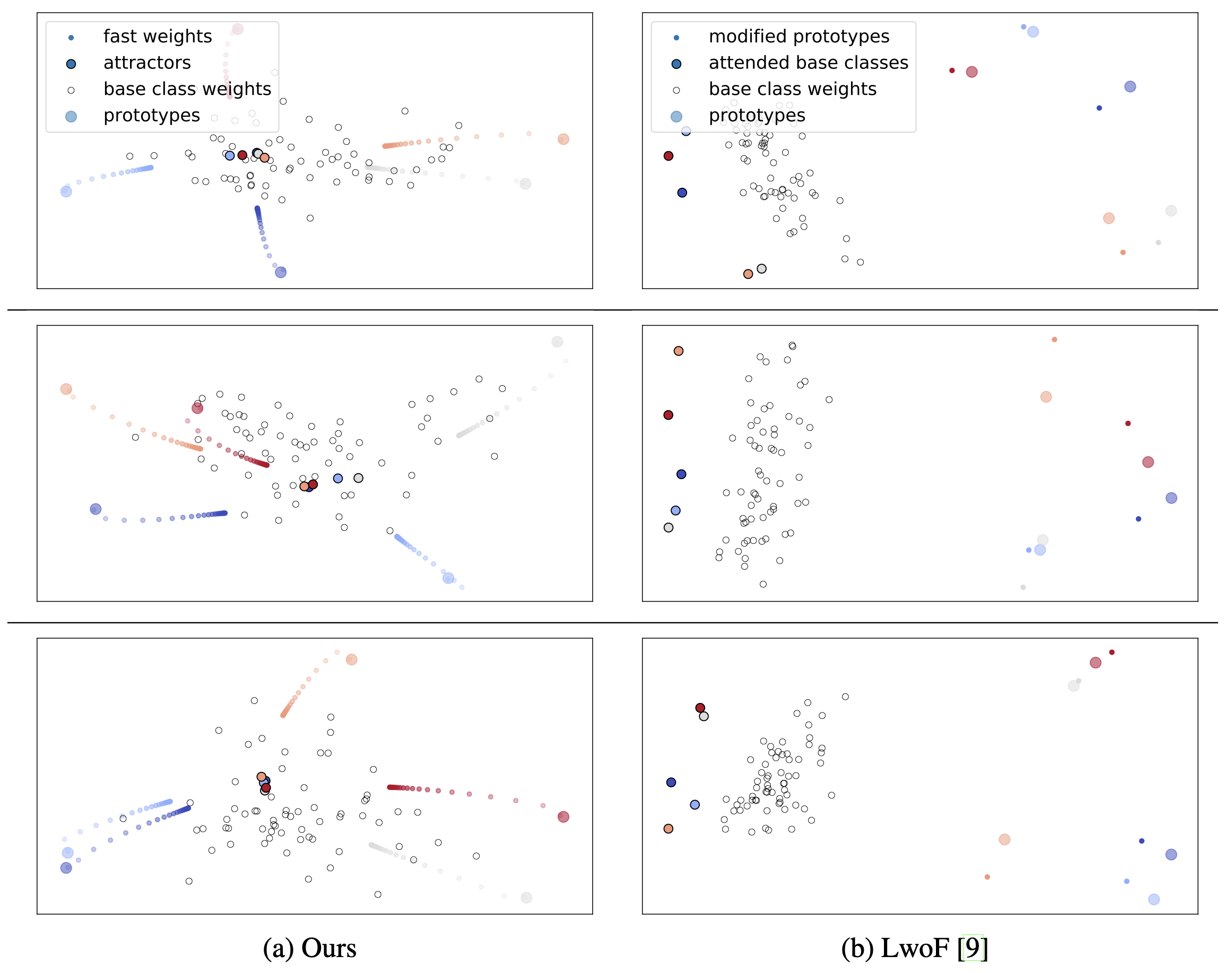

Appendix B Visualization of Few-Shot Episodes

We include more visualization of few-shot episodes in Figure 5, highlighting the differences between our method and “Dynamic Few-Shot Learning without Forgetting” (Gidaris and Komodakis, 2018).

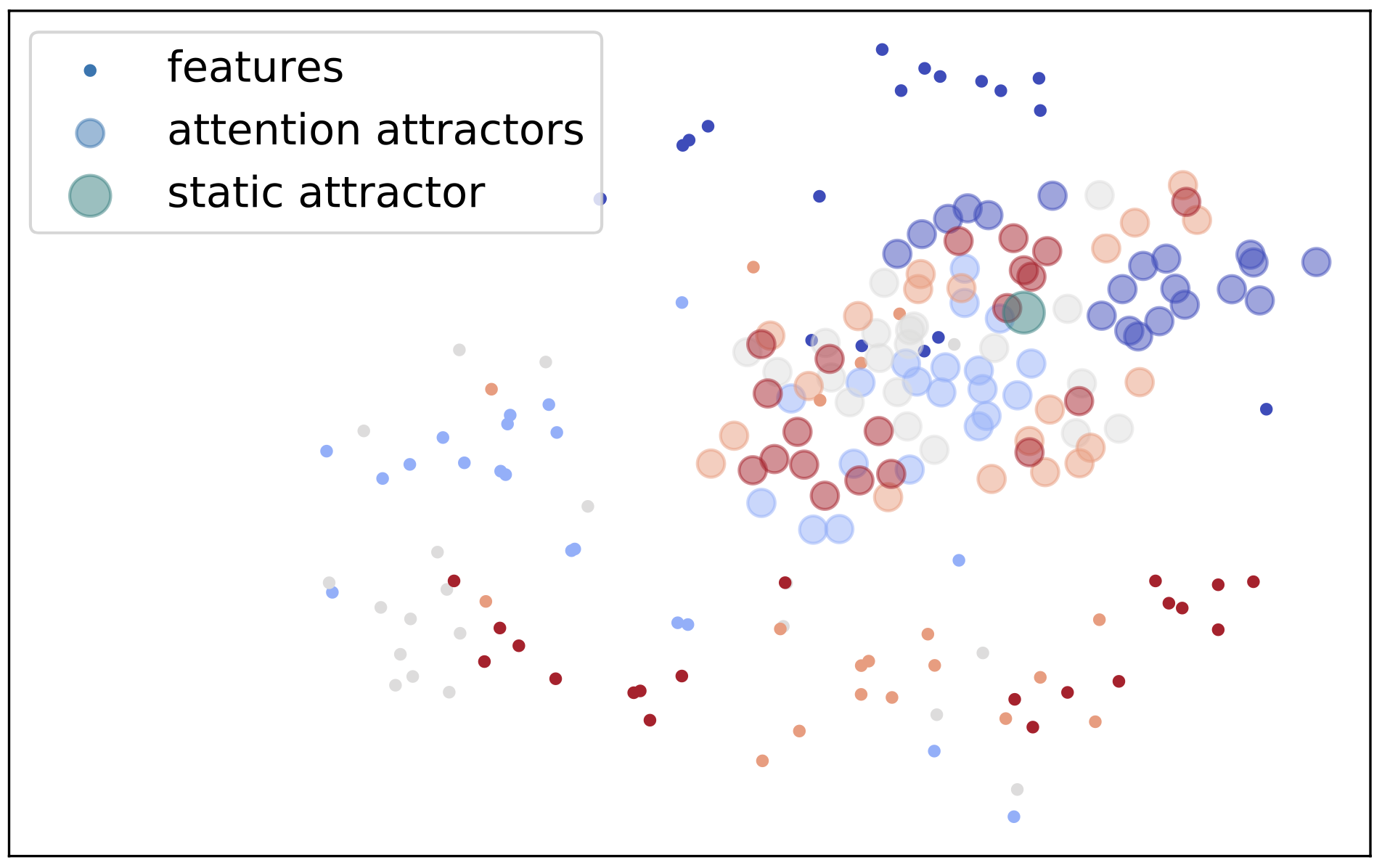

Appendix C Visualization of Attention Attractors

To further understand the attractor mechanism, we picked 5 semantic classes in mini-ImageNet and visualized their attention attractors across 20 episodes, shown in Figure 6. The attractors roughly form semantic clusters, whereas the static attractor stays in the center of all attractors.

| Model | 1-shot Acc. | 1-shot $\Delta$ | 1-shot $\Delta_a$ | 1-shot $\Delta_b$ | 5-shot Acc. | 5-shot $\Delta$ | 5-shot $\Delta_a$ | 5-shot $\Delta_b$ |
|---|---|---|---|---|---|---|---|---|
| LR | 52.74 ± 0.24 | -13.95 | -8.98 | -24.32 | 60.34 ± 0.20 | -13.60 | -10.81 | -15.97 |
| LR +S | 53.63 ± 0.30 | -12.53 | -9.44 | -15.62 | 62.50 ± 0.30 | -11.29 | -13.84 | -8.75 |
| LR +A | 55.31 ± 0.32 | -11.72 | -12.72 | -10.71 | 63.00 ± 0.29 | -10.80 | -13.59 | -8.01 |
| MLP | 49.36 ± 0.29 | -16.78 | -8.95 | -24.61 | 60.85 ± 0.29 | -12.62 | -11.35 | -13.89 |
| MLP +S | 54.46 ± 0.31 | -11.74 | -12.73 | -10.74 | 62.79 ± 0.31 | -10.77 | -12.61 | -8.80 |
| MLP +A | 54.95 ± 0.30 | -11.84 | -12.81 | -10.87 | 63.04 ± 0.30 | -10.66 | -12.55 | -8.77 |

| Model | 1-shot Acc. | 1-shot $\Delta$ | 1-shot $\Delta_a$ | 1-shot $\Delta_b$ | 5-shot Acc. | 5-shot $\Delta$ | 5-shot $\Delta_a$ | 5-shot $\Delta_b$ |
|---|---|---|---|---|---|---|---|---|
| LR | 48.84 ± 0.23 | -10.44 | -11.65 | -9.24 | 62.08 ± 0.20 | -8.00 | -5.49 | -10.51 |
| LR +S | 55.36 ± 0.32 | -6.88 | -7.21 | -6.55 | 65.53 ± 0.30 | -4.68 | -4.72 | -4.63 |
| LR +A | 55.98 ± 0.32 | -6.07 | -6.64 | -5.51 | 65.58 ± 0.29 | -4.39 | -4.87 | -3.91 |
| MLP | 41.22 ± 0.35 | -10.61 | -11.25 | -9.98 | 62.70 ± 0.31 | -7.44 | -6.05 | -8.82 |
| MLP +S | 56.16 ± 0.32 | -6.28 | -6.83 | -5.73 | 65.80 ± 0.31 | -4.58 | -4.66 | -4.51 |
| MLP +A | 56.11 ± 0.33 | -6.11 | -6.79 | -5.43 | 65.52 ± 0.31 | -4.48 | -4.91 | -4.05 |

Appendix D Dataset Statistics

In this section, we include more details on the datasets we used in our experiments.

| Classes | Purpose | mini-ImageNet Split | mini-ImageNet N. Cls | mini-ImageNet N. Img | tiered-ImageNet Split | tiered-ImageNet N. Cls | tiered-ImageNet N. Img |
|---|---|---|---|---|---|---|---|
| Base | Train | Train-Train | 64 | 38,400 | Train-A-Train | 200 | 203,751 |
| Base | Val | Train-Val | 64 | 18,748 | Train-A-Val | 200 | 25,460 |
| Base | Test | Train-Test | 64 | 19,200 | Train-A-Test | 200 | 25,488 |
| Novel | Train | Train-Train | 64 | 38,400 | Train-B | 151 | 193,996 |
| Novel | Val | Val | 16 | 9,600 | Val | 97 | 124,261 |
| Novel | Test | Test | 20 | 12,000 | Test | 160 | 206,209 |

D.1 Validation and testing splits for base classes

In standard few-shot learning, the meta-training, validation, and test sets have disjoint sets of object classes. However, in our incremental few-shot learning setting, evaluating the model's predictions on the base classes requires additional validation and test splits of the meta-training set. Splits and dataset statistics are listed in Table 9. For mini-ImageNet, Gidaris and Komodakis (2018) released additional images for evaluating on the training classes, namely “Train-Val” and “Train-Test”. For tiered-ImageNet, we split out 20% of the images for validation and testing of the base classes.
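As a rough illustration of the tiered-ImageNet hold-out described above, the following sketch splits each base class's images into train, validation, and test partitions. The even 10%/10% division of the held-out 20%, the per-class splitting, and all names (`split_base_class_images`, `toy_images`) are assumptions made for illustration, not details confirmed by the text.

```python
import random


def split_base_class_images(images_by_class, val_frac=0.1, test_frac=0.1, seed=0):
    """Split every class's image list into train/val/test partitions.

    images_by_class: dict mapping a class id to a list of image ids.
    The 10%/10% division of the held-out 20% is an assumption.
    """
    rng = random.Random(seed)
    splits = {"train": {}, "val": {}, "test": {}}
    for cls, images in images_by_class.items():
        shuffled = list(images)
        rng.shuffle(shuffled)
        n_val = int(len(shuffled) * val_frac)
        n_test = int(len(shuffled) * test_frac)
        # The first slices are held out; everything else stays for training.
        splits["val"][cls] = shuffled[:n_val]
        splits["test"][cls] = shuffled[n_val:n_val + n_test]
        splits["train"][cls] = shuffled[n_val + n_test:]
    return splits


# Hypothetical toy data: 3 classes with 100 images each.
toy_images = {
    f"class_{c}": [f"class_{c}_img_{i}" for i in range(100)] for c in range(3)
}
splits = split_base_class_images(toy_images)
```

Splitting within each class keeps all base classes present in every partition, which is what evaluating base-class predictions requires.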

D.2 Novel classes

In mini-ImageNet experiments, the same training set is used for both $\mathcal{D}_a$ and $\mathcal{D}_b$. In order to pretend that the classes in the few-shot episode are novel, following Gidaris and Komodakis (2018), we masked the base classes in $W_a$, which contains 64 base classes. In other words, we essentially train for a 59+5 classification task. We found that under this setting, the progress of meta-learning in the second stage is not very significant, since all classes have already been seen before.
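The 59+5 construction can be sketched as follows: in each episode, 5 of the 64 base classes are chosen to act as novel classes and are hidden from the base classifier. Implementing the mask by setting the corresponding base logits to negative infinity is an assumption made here for illustration; the helper names are hypothetical.

```python
import random

NUM_BASE = 64   # base classes in mini-ImageNet
NUM_NOVEL = 5   # base classes treated as novel in one episode


def sample_masked_episode(rng):
    """Choose which base classes play the role of novel classes."""
    pseudo_novel = sorted(rng.sample(range(NUM_BASE), NUM_NOVEL))
    remaining_base = [c for c in range(NUM_BASE) if c not in pseudo_novel]
    return remaining_base, pseudo_novel


def mask_base_logits(base_logits, pseudo_novel):
    """Return a copy of the logits with masked classes set to -inf.

    Assumption: masking is applied at the logit level, so a masked
    base class can never be predicted by the base classifier.
    """
    masked = list(base_logits)
    for c in pseudo_novel:
        masked[c] = float("-inf")
    return masked


rng = random.Random(0)
remaining_base, pseudo_novel = sample_masked_episode(rng)
base_logits = [0.0] * NUM_BASE          # placeholder logits for one example
masked_logits = mask_base_logits(base_logits, pseudo_novel)
```

The 59 remaining base classes and the 5 pseudo-novel classes together form the 64-way task seen in each episode.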

In tiered-ImageNet experiments, to emulate the process of learning novel classes during the second stage, we split the training classes into base classes (“Train-A”) with 200 classes and novel classes (“Train-B”) with 151 classes, solely for meta-learning purposes. During the first stage, the classifier is trained using Train-A-Train data. In each meta-learning episode, we sample few-shot examples from the novel classes (Train-B) and a query base set from Train-A-Val.
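The episode construction described above can be sketched as a small sampler that draws a few-shot support set from Train-B and a base-class query set from Train-A-Val. This is a minimal sketch under stated assumptions: the function name, the default sizes, and the toy class and image ids are hypothetical, and query examples for the novel classes are omitted because the text does not describe how they are drawn.

```python
import random


def sample_episode(train_b, train_a_val, rng, n_way=5, n_shot=1, n_query_base=5):
    """Sample one meta-learning episode.

    train_b:     dict of novel class id -> list of image ids (Train-B).
    train_a_val: dict of base class id  -> list of image ids (Train-A-Val).
    Returns (support, query_base), each a list of (image id, class id) pairs.
    """
    # Pick the novel classes for this episode, then n_shot images per class.
    novel_classes = rng.sample(sorted(train_b), n_way)
    support = [
        (img, cls)
        for cls in novel_classes
        for img in rng.sample(train_b[cls], n_shot)
    ]
    # Base-class queries are drawn from held-out images of the base classes.
    base_pool = [
        (img, cls) for cls, imgs in sorted(train_a_val.items()) for img in imgs
    ]
    query_base = rng.sample(base_pool, n_query_base)
    return support, query_base


# Hypothetical toy data standing in for the real splits.
train_b = {f"novel_{c}": [f"novel_{c}_img_{i}" for i in range(10)] for c in range(8)}
train_a_val = {f"base_{c}": [f"base_{c}_img_{i}" for i in range(4)] for c in range(6)}

support, query_base = sample_episode(train_b, train_a_val, random.Random(0))
```

Drawing base queries from Train-A-Val rather than Train-A-Train means the episode objective is computed on base-class images that the first-stage classifier was not trained on.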

200 Base Classes (“Train-A”):

n02128757, n02950826, n01694178, n01582220, n03075370, n01531178, n03947888, n03884397, n02883205, n03788195, n04141975, n02992529, n03954731, n03661043, n04606251, n03344393, n01847000, n03032252, n02128385, n04443257, n03394916, n01592084, n02398521, n01748264, n04355338, n02481823, n03146219, n02963159, n02123597, n01675722, n03637318, n04136333, n02002556, n02408429, n02415577, n02787622, n04008634, n02091831, n02488702, n04515003, n04370456, n02093256, n01693334, n02088466, n03495258, n02865351, n01688243, n02093428, n02410509, n02487347, n03249569, n03866082, n04479046, n02093754, n01687978, n04350905, n02488291, n02804610, n02094433, n03481172, n01689811, n04423845, n03476684, n04536866, n01751748, n02028035, n03770439, n04417672, n02988304, n03673027, n02492660, n03840681, n02011460, n03272010, n02089078, n03109150, n03424325, n02002724, n03857828, n02007558, n02096051, n01601694, n04273569, n02018207, n01756291, n04208210, n03447447, n02091467, n02089867, n02089973, n03777754, n04392985, n02125311, n02676566, n02092002, n02051845, n04153751, n02097209, n04376876, n02097298, n04371430, n03461385, n04540053, n04552348, n02097047, n02494079, n03457902, n02403003, n03781244, n02895154, n02422699, n04254680, n02672831, n02483362, n02690373, n02092339, n02879718, n02776631, n04141076, n03710721, n03658185, n01728920, n02009229, n03929855, n03721384, n03773504, n03649909, n04523525, n02088632, n04347754, n02058221, n02091635, n02094258, n01695060, n02486410, n03017168, n02910353, n03594734, n02095570, n03706229, n02791270, n02127052, n02009912, n03467068, n02094114, n03782006, n01558993, n03841143, n02825657, n03110669, n03877845, n02128925, n02091032, n03595614, n01735189, n04081281, n04328186, n03494278, n02841315, n03854065, n03498962, n04141327, n02951585, n02397096, n02123045, n02095889, n01532829, n02981792, n02097130, n04317175, n04311174, n03372029, n04229816, n02802426, n03980874, n02486261, n02006656, n02025239, n03967562, n03089624, n02129165, 
n01753488, n02124075, n02500267, n03544143, n02687172, n02391049, n02412080, n04118776, n03838899, n01580077, n04589890, n03188531, n03874599, n02843684, n02489166, n01855672, n04483307, n02096177, n02088364.

151 Novel Classes (“Train-B”):

n03720891, n02090379, n03134739, n03584254, n02859443, n03617480, n01677366, n02490219, n02749479, n04044716, n03942813, n02692877, n01534433, n02708093, n03804744, n04162706, n04590129, n04356056, n01729322, n02091134, n03788365, n01739381, n02727426, n02396427, n03527444, n01682714, n03630383, n04591157, n02871525, n02096585, n02093991, n02013706, n04200800, n04090263, n02493793, n03529860, n02088238, n02992211, n03657121, n02492035, n03662601, n04127249, n03197337, n02056570, n04005630, n01537544, n02422106, n02130308, n03187595, n03028079, n02098413, n02098105, n02480855, n02437616, n02123159, n03803284, n02090622, n02012849, n01744401, n06785654, n04192698, n02027492, n02129604, n02090721, n02395406, n02794156, n01860187, n01740131, n02097658, n03220513, n04462240, n01737021, n04346328, n04487394, n03627232, n04023962, n03598930, n03000247, n04009552, n02123394, n01729977, n02037110, n01734418, n02417914, n02979186, n01530575, n03534580, n03447721, n04118538, n02951358, n01749939, n02033041, n04548280, n01755581, n03208938, n04154565, n02927161, n02484975, n03445777, n02840245, n02837789, n02437312, n04266014, n03347037, n04612504, n02497673, n03085013, n02098286, n03692522, n04147183, n01728572, n02483708, n04435653, n02480495, n01742172, n03452741, n03956157, n02667093, n04409515, n02096437, n01685808, n02799071, n02095314, n04325704, n02793495, n03891332, n02782093, n02018795, n03041632, n02097474, n03404251, n01560419, n02093647, n03196217, n03325584, n02493509, n04507155, n03970156, n02088094, n01692333, n01855032, n02017213, n02423022, n03095699, n04086273, n02096294, n03902125, n02892767, n02091244, n02093859, n02389026.